In part 1 of this blog series, we introduce the motivation behind the “Data for Good” solution and its core components.

Nonprofits typically work to create awareness about the rights of distressed and neglected sections of society. Unfortunately, they often fail to reach their true potential because they lack resources and proper guidance.

The modern world creates and has access to large swaths of data that can be harnessed to gain insights. For nonprofits, data is a critical asset which, when combined with technology, can provide valuable insights. Nonprofits across the world are slowly realizing the power of data to improve their operational efficiency, cost management, donor outreach, and planning for the future.

Data analytics combined with machine learning has significant potential to benefit nonprofits. Data analytics is a critical tool for discovering, diagnosing, and deploying models that nonprofits can use to better serve their communities. Although nonprofits are often averse to data-driven decision making, there are many examples of them putting data to good use.

During VMworld 2019, VMware CEO Pat Gelsinger asked the question: “Is tech good or bad?” He continued: “The answer is generally neutral; it’s neither good or bad, but it’s often how we shape it and will technology shape the world that we want to live in, or will it create a world that we’re afraid to live in? I sincerely and equally believe that technology has the opportunity to expand the life of every human on the planet and eradicate diseases that have plagued mankind … to give modern education to every child on the planet … to lift the remaining 10% of the planet out of poverty, reverse the implications of climate change.”

Technology holds a lot of potential to be a force for good, and data is one of the key elements that can be combined with it to that end.

Data for Good Initiative:

The Data for Good initiative was inspired by the “Tech as a force for Good” vision of Pat Gelsinger (VMware CEO). The goal was to put the resources of the VMware Cloud Solutions Lab (VCSLAB) to work for good. Can we build a solution that leverages VMware Cloud Foundation, Tanzu, and the modern hardware available in the VCSLAB to serve the technology needs of nonprofits?

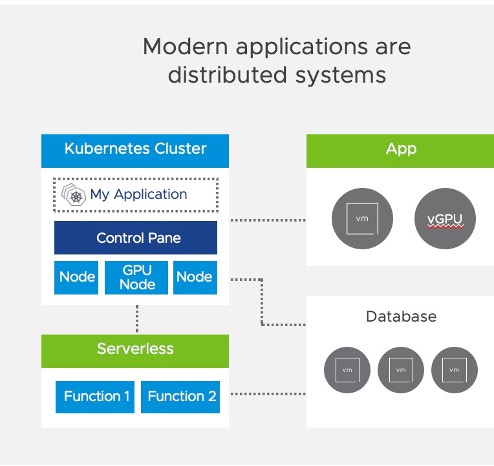

Demands of Modern Applications

Applications are essential, and modern applications are scaling at rates never experienced before. IDC predicts that over 500 million apps will be developed using cloud native methodologies over the next 5 years, which is more than were developed in the past 40 years combined.

But applications are also becoming much more complex and diverse. As a result, application innovation is driving a need for infrastructure modernization. Modern apps require infrastructure that supports all of their components. Without an adaptive infrastructure, developers and IT operators are often at odds and struggle to provide the services that apps require.

Developers essentially expect the infrastructure to just work with their cloud native applications. IT admins are using software-defined technologies to modernize their infrastructure and make it more agile for cloud native developers, while ensuring stability and consistency across environments and clouds and minimizing security exposure. This is where many organizations struggle: how can you meet the needs of fast-paced developers and DevOps teams while keeping the infrastructure agile, secure, and stable?

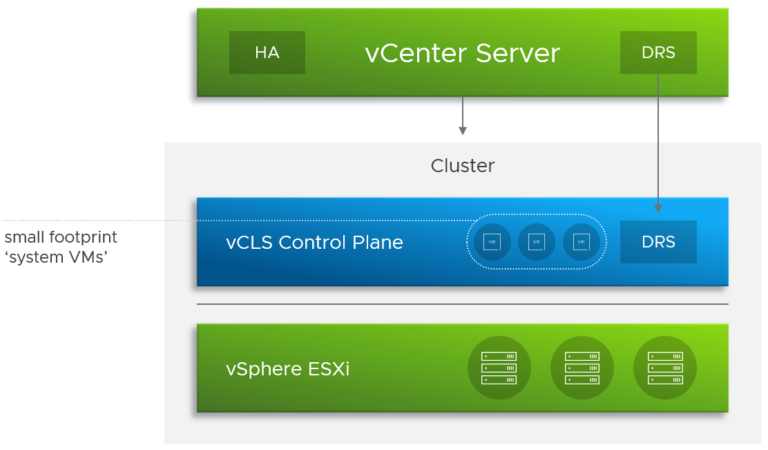

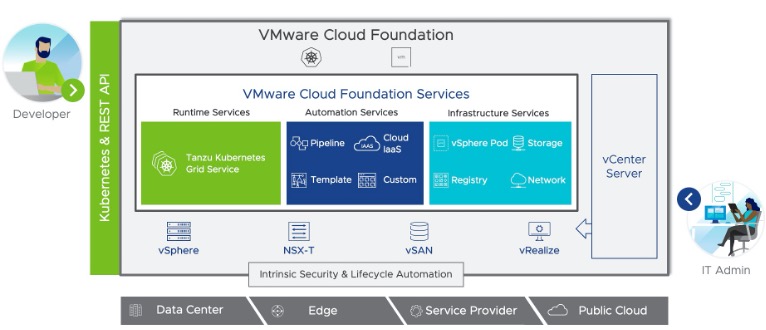

VMware Cloud Foundation with Tanzu:

VMware Cloud Foundation (VCF) with Tanzu delivers Kubernetes with the agility, flexibility, and security that modern apps need. VMware Tanzu delivers the infrastructure and services needed to rapidly deploy new applications as business needs change. VCF provides consistent infrastructure and operations with cloud agility, scale, and simplicity.

Figure 2: VCF with Tanzu brings together Developers and VI admins

VMware Cloud Foundation with Tanzu is a hybrid cloud platform that accelerates the development of modern applications and automates the deployment and lifecycle management of complex Kubernetes environments.

- IT admins have complete visibility and control of virtualized compute, network and storage infrastructure resources through VCF.

- Software-defined compute, storage, and networking with vSphere, NSX-T, and vSAN/VVOL provide ease of deployment and automation.

- Developers have frictionless access to Kubernetes environments and infrastructure resources through VCF Services.

- VMware Cloud Foundation provides runtime services, automation services, and infrastructure services, all delivered via Kubernetes and RESTful APIs.

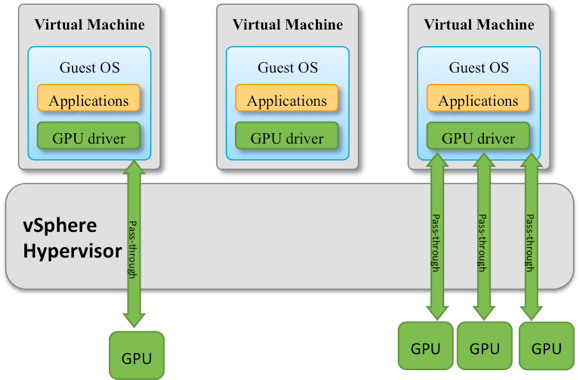

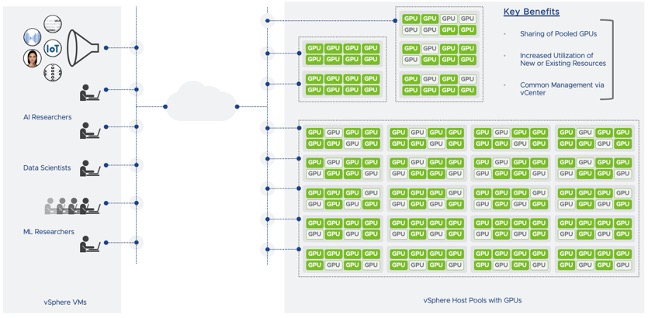

With the impending end of Moore’s law, the spark fueling the current revolution in deep learning is the availability of enough compute horsepower to train neural-network based models in a reasonable amount of time. That horsepower comes largely from GPUs, which NVIDIA began optimizing for deep learning in 2012. Much machine learning and AI work relies on processing large blocks of data in parallel, which makes GPUs a good fit for ML tasks, and most machine learning frameworks have built-in support for GPUs. Data scientists therefore need capabilities such as GPU access from Kubernetes environments.
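To make "GPU access from Kubernetes" a little more concrete, here is a minimal sketch of how a workload commonly asks a Kubernetes cluster for a GPU. It assumes a cluster where the NVIDIA device plugin exposes GPUs under the `nvidia.com/gpu` resource name, which is one common approach and not necessarily the one used in this solution. The helper name `gpu_pod_manifest`, the pod name, and the image are hypothetical placeholders.

```python
import json


def gpu_pod_manifest(name, image, gpus=1):
    """Build a Pod manifest (as a dict) that requests `gpus` NVIDIA GPUs.

    Assumption: the cluster runs the NVIDIA device plugin, which advertises
    GPUs as the extended resource "nvidia.com/gpu".
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,  # hypothetical training image
                    # Extended resources such as GPUs are requested via limits.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


if __name__ == "__main__":
    # Print the manifest as JSON; kubectl accepts JSON as well as YAML.
    manifest = gpu_pod_manifest("train-job", "example.com/ml/train:latest")
    print(json.dumps(manifest, indent=2))
```

The printed JSON could be saved to a file and submitted with `kubectl apply -f`. A pod like this can only be scheduled onto a node that physically hosts a free GPU, which is a limitation for clusters where GPUs are scarce.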

vSphere 7 brings GPU sharing capabilities with Bitfusion

The release of vSphere 7 introduced many new features, including vSphere Bitfusion, which provides the ability to share NVIDIA GPUs over the network. Modern applications often need access to GPUs and their massive compute capabilities for timely and efficient processing, and Kubernetes-based developer environments in particular have a significant need for GPU access. A combination of the VMware Tanzu platform and vSphere Bitfusion for GPU sharing over the network can help meet the infrastructure needs of modern data scientists.

Figure 3: Leveraging vSphere Bitfusion for GPU access over network

In part 2, we will look at vMLP (a Machine Learning Platform), and in part 3, we will bring all the components together to build the “Data for Good” solution.