This is part 3 in a series of blog articles on the various use cases for machine learning applications on VMware Cloud on AWS and other VMware Cloud infrastructure. We talked about tabular data as a great target for ML apps in part 1. We also showed that the classic linear and decision tree models for ML exhibit higher levels of model explainability in part 2 and so those are a good target for deployment on VMware Cloud on AWS. In part 3 here, we look at the capability for exercising the various ML platforms and tools on the hybrid cloud, so you can choose where to train and separately deploy your models more flexibly.

What is the Hybrid Cloud?

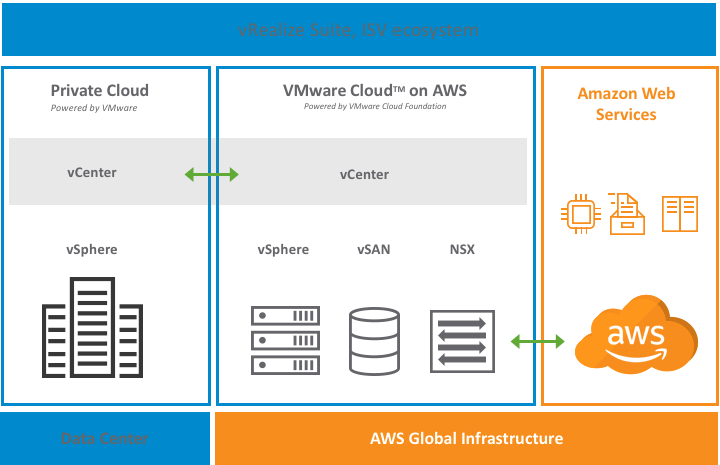

We think of the hybrid cloud at VMware as the combination of public cloud and private cloud services (i.e. on-premises services) to provide a unified operating platform. Ideally, we want our application workloads to operate in exactly the same way, and be managed in the same way, no matter where they are running on this combination. This brings uniformity of operation, ease of movement of workloads, scaling and application portability. We want the hybrid cloud to allow for workload-level mobility from private to public cloud and back again, at will. Components of one workload may also be running across the clouds. Your ETL functionality on source databases/data warehouses may be on-premises, while the ML training function may take place in the public cloud, based on the extracted data.

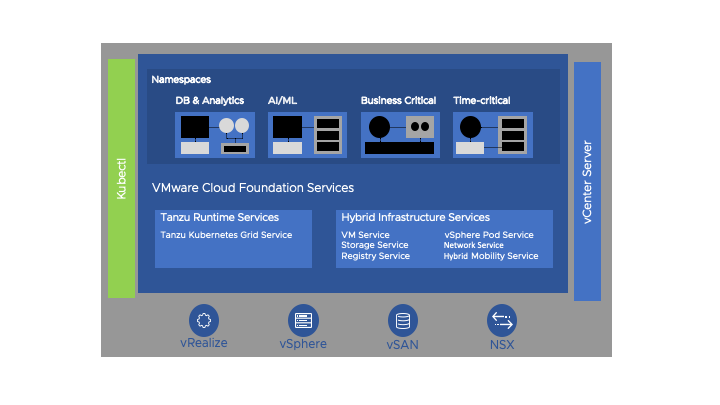

VMware’s full software-defined data center (SDDC) family of software is made up of the core vSphere ESXi hypervisor, vSAN storage virtualization and virtualized networking in VMware NSX. These operate together under one platform called VMware Cloud Foundation (VCF). VCF is the core platform for both on-premises deployments and the public cloud managed services, the first of which is named VMware Cloud on AWS. This environment is managed by a common set of tools, such as vCenter Server and the vRealize suite. That full VCF stack operates on many thousands of bare metal servers today in customers’ on-premises data centers. The same VCF software runs, in the same way, on bare metal servers that are located in AWS and other cloud providers’ data centers. We refer to the public cloud service offering that is fully managed by VMware on behalf of a customer (setup, administration, patching, lifecycle, etc.) as VMware Cloud on AWS. You can immediately see the uniformity of operation and simplicity that comes from having this common platform on both private and public clouds.

That same managed cloud service, based on VCF, is available on other clouds too. It is important to note that VMware Cloud on AWS is not a VM-level emulation based on any underlying layer. Instead, the core ESXi hypervisor software within VCF runs on and controls the cloud-based hardware directly, just as it does in on-premises deployments. Administrators often regard VMware Cloud on AWS as a cloud extension to their current VMware-driven data center. Some customers think of it as a potential replacement for parts of their data centers today.

The key benefit of this, from an application viewpoint, is that the two deployment platforms, on-premises and public cloud, look exactly the same both to operations people and to application deployment people. You can take a set of VMs running an ML application today on-premises on VCF, migrate them live to VMware Cloud on AWS and bring them back again, at will. You can treat the two environments, on-premises and public cloud, as one entity to be managed centrally by your vCenter team, using tools they are already familiar with. There is no application refactoring required here.

The Value of Hybrid Cloud to the Machine Learning Community

Why is this common hybrid cloud platform important for machine learning workloads, in particular? There are several reasons why this matters to the ML practitioner – i.e. the data scientist and data engineer. We look at the variety of ML development and deployment requirements first, below, and then the variety of tools and platforms that data scientists use to do their work.

The Different Requirements of the ML Environment

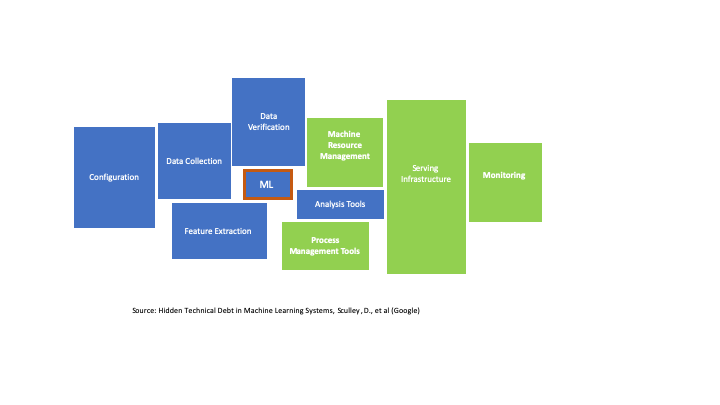

Choosing and tuning the core ML algorithm or model type is clearly at the heart of the data scientist’s work, indicated by the ML box at the center of the figure. As we know from the published work of Sculley et al., however, there are many more important considerations in the machine learning landscape than the parameters of the ML models themselves. There is considerable hidden technical debt in ML, and it leads to platform requirements. These surrounding concerns, as pictured, dwarf the core ML algorithm choices seen at the center. The areas covered by the ML tools/platform vendors tend to be toward the left, in blue. The areas in which we would apply the VMware hybrid cloud are shown in green, to the right. VMware also plays a key role at the infrastructure level, of course, supporting the applications for data collection, feature extraction, configuration and ML model development. The key point is that no matter where you choose to perform different subsets of these functions, on-premises or on the public cloud, the base infrastructure does not change with VCF.

The two general areas of focus in the figure are (a) the tools and platforms that support data collection, verification, feature extraction, system configuration and core ML design, and (b) the serving infrastructure, machine resource management and monitoring. These work better together when all the tools and platforms run on a common cloud platform. VMware provides intrinsic value in the Resource Management, Serving Infrastructure and Monitoring areas. Part (a) is handled at the application level by the ML tools/platform vendors, and we will say more about running these on VMware below.

You may want to train models in one location and deploy them for inference in another. You may choose public cloud for inference because it gives your model a global presence for applications that are widely dispersed. Training can be done on the private cloud and model deployment done on public cloud – or vice versa. Having that capability to choose where you do what, without the infrastructure getting in the way, is a very desirable situation for the data scientist. We want to reduce the variables that the data scientist has to work with to a minimum, especially at the infrastructure level. The data scientist should not have to worry about the health of their virtual machines or how long they can run them for, for example.
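To make the train-here, serve-there idea concrete, here is a minimal sketch using only the Python standard library. It is an illustration under stated assumptions, not a recommended toolchain: the tiny least-squares model, the JSON artifact layout and all function names are hypothetical stand-ins for whatever framework and model format your team actually uses.

```python
import json
from typing import Dict, List


def train_linear_model(xs: List[float], ys: List[float]) -> Dict[str, float]:
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return {"slope": slope, "intercept": mean_y - slope * mean_x}


def export_model(model: Dict[str, float]) -> str:
    """Serialize the trained parameters into a portable artifact (hypothetical layout)."""
    return json.dumps({"format_version": 1, "params": model})


def load_model(artifact: str) -> Dict[str, float]:
    """Restore the parameters at the serving site."""
    return json.loads(artifact)["params"]


def predict(model: Dict[str, float], x: float) -> float:
    """Run inference with the restored parameters."""
    return model["slope"] * x + model["intercept"]


# Training site (for example, on-premises): fit the model and export it.
artifact = export_model(
    train_linear_model([0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0])
)

# Serving site (for example, public cloud): only the small artifact has moved.
served_model = load_model(artifact)
prediction = predict(served_model, 4.0)
```

The point of the sketch is that the serving side depends only on the exported artifact, so the same code path works whichever side of the hybrid cloud each step runs on.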

Some ML algorithms may need acceleration technology to speed them up at training time, while others may not. For the training phase of the ML lifecycle, choose the environment where you have that acceleration available to you, such as GPUs deployed on-premises on vSphere. Then use the cloud environment for the model test and deployment phases to give your trained models global reach, for example.

Data Locality

The location of the data that will be fed into the ML training mechanism matters. This data is often bulky and we want to move it as little as possible. That big set of data will be used several times over in a training run, in an iterative way. It is best to execute your ML training close to the source of the data for that reason. As more data and databases move into the public cloud, you can do your training there. If data must stay on-premises for now, execute your ML training on it there. The resulting model can be moved in either direction after training is done.
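A rough back-of-the-envelope calculation shows why moving the model is preferable to moving the data. The sizes and link speed below are illustrative assumptions only (a 2 TB training set, a 50 MB trained model, a 1 Gbit/s link between sites), and the estimate ignores protocol overhead and contention.

```python
def transfer_hours(size_bytes: float, link_bits_per_second: float) -> float:
    """Idealized time to copy size_bytes over a link, in hours."""
    seconds = size_bytes * 8 / link_bits_per_second
    return seconds / 3600


# All three figures are assumptions chosen for illustration.
DATASET_BYTES = 2e12   # 2 TB of training data
MODEL_BYTES = 50e6     # 50 MB trained model artifact
LINK_BPS = 1e9         # 1 Gbit/s link between on-premises and cloud

dataset_hours = transfer_hours(DATASET_BYTES, LINK_BPS)
model_hours = transfer_hours(MODEL_BYTES, LINK_BPS)

print(f"Move the dataset: {dataset_hours:.2f} hours")
print(f"Move the model:   {model_hours * 3600:.2f} seconds")
```

Under these assumptions the dataset takes hours to move while the model takes well under a second, which is why training next to the data and shipping the result is usually the cheaper path.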

The ML Vendor Platforms and the Hybrid Cloud

There is a wealth of application-level tools and platforms for machine learning users/developers in the broad ML ecosystem. That collection of platforms continues to grow at a rapid pace, with new platforms appearing regularly. VMware does not have a preference for one set of tools over another – the idea is to support as many ML tool platforms as are needed by users on vSphere. Organizations will make the best choice for their ML needs among this ecosystem. We see this collection of tools and platforms as breaking down into four outline categories:

- ETL and data transport tools for the data engineer mainly concerned with organizing and cleansing the data;

- Model creation, notebooks and testing tools for the code developer/data scientist;

- Automated ML tools for the citizen data scientist or business person;

- Machine learning operations (MLOps) platforms for controlled, versioned deployment of your trained models and governance of datasets and models.

Many ML vendors cross the boundaries between these categories, so the distinction between them is not adhered to rigidly, but it allows us to summarize their functionality in a meaningful way.

VMware and its partner companies have put many of these tools through their paces in our labs to show their functionality when running on the VMware vSphere and VCF platforms. By proving to ourselves that they operate well on VCF, we then establish confidence that they operate on the VMware Cloud on AWS service equally.

Examples of the ML tools/platforms that have undergone testing on the VMware Cloud Foundation platform, along with links to the reference architecture documents that apply to each, are:

DataRobot

Domino Data Lab (reference architecture in progress)

H2O

Iguazio

KNIME

SAS

While the above independent software vendors represent a good cross-section of the development/validation and serving phases of the machine learning lifecycle, we see partner companies that also focus on the production deployment of models. Examples here are:

Algorithmia (who are testing their platforms on VMware vSphere 7 in the labs)

cnvrg.io

Open Source ML Tools and Platforms

Many of the vendors we mentioned above also supply an open source version of their tooling. These are often Notebook-based as they frequently cater for the kind of data scientist who writes code and uses Jupyter or similar notebooks. Examples of this are H2O with their Flow environment, DataRobot’s tools and Databricks with their Community Edition. Several of these tools have been run on virtual machines on the VMware Cloud Foundation infrastructure – and so are also candidates for VMware Cloud on AWS.

One of the most common open source platforms in use today for data engineering, ETL feature extraction and now full-blown machine learning is Apache Spark. VMware has conducted several performance studies on Spark for ML and has shown that Spark-based ML crosses the boundary between on-premises vSphere and VMware Cloud on AWS very well. More details can be found at

High Performance Virtualized Spark Clusters on Kubernetes for Deep Learning

and in a comparison of Spark on vSphere on-premises versus Spark on VMware Cloud on AWS for machine learning.

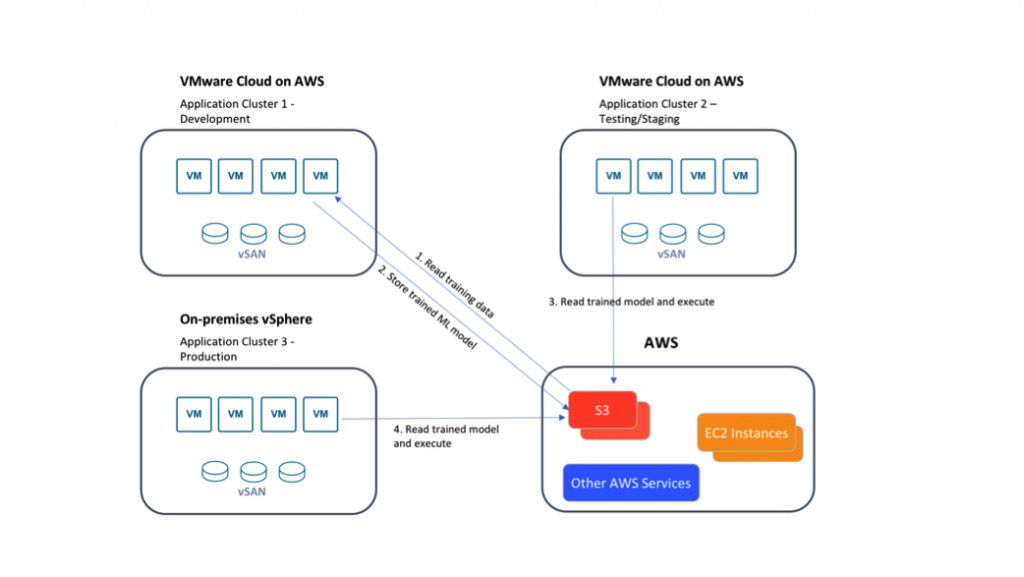

In these performance exercises, the differences between the two platforms were shown to be negligible. VMware personnel worked with the Spark technology to show that not only would it perform very well on-premises and on VMware Cloud on AWS, but that data could be shared between those two and with native AWS storage environments in an architecture that combined all three. The figure shows this for the case of model development in one virtualized environment (application cluster 1), model testing in another (application cluster 2) and model deployment on a third platform within a hybrid cloud setup (application cluster 3).

This design is more fully described here and here.

Popular libraries for machine learning are those associated with Python, TensorFlow/Keras and Caffe. All of these have been used heavily in testing on VMware vSphere and are shown in multiple performance studies to behave very well on vSphere. You can learn more about those studies here.

There are also VMware-created toolkits that are available in the open source communities, such as VMware Machine Learning Platform (vMLP) and the InstaML toolkits for reinforcement learning.

Kubernetes as the Unifying Deployment Platform for ML Tools

From our experience at VMware of working with the ML platform vendors, it is clear that container-based and Kubernetes-controlled deployments of these ML platforms are the key unifying themes across the ecosystem. Each ML platform vendor has developed an integration with Kubernetes of their own design, either as a Kubernetes operator (in the case of Confluent with Kafka, for example) or otherwise. Some of the vendors, often those in the MLOps area, ship a flavor of open source Kubernetes that they have fully tested with. Others are open to using Kubernetes from various sources, such as a Kubernetes that is supplied by the operating/serving infrastructure platform.

With the general availability of VMware vSphere 7 with Kubernetes and, within it, Tanzu Kubernetes Grid Services for workload clusters, advanced vendors are initially engaged in porting their Kubernetes-managed products to VMware vSphere with Kubernetes. Once that is done, they will rely on the virtualization platform for Kubernetes functionality, rather than having to take care of that area themselves. We are already seeing interest in doing that from a subset of the ML platform providers and we expect that count to grow rapidly. This will make life easier again for the data science and data engineering community. With one common, open source platform for deploying tools and for deploying models in serving mode, the Kubernetes management tier will reduce the variability between the private and public clouds. VMware has worked on deploying the Confluent operator for Kubernetes as one example of this level playing field for deployments and shown how that will work here.
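As a sketch of what a common deployment tier means in practice, the snippet below builds a standard Kubernetes `apps/v1` Deployment manifest for a model-serving container and emits it as JSON. The function name, the model name `churn-model`, the image reference and the port are hypothetical placeholders, not taken from any vendor product; only the manifest fields themselves are standard Kubernetes.

```python
import json
from typing import Any, Dict


def model_serving_deployment(
    name: str, image: str, replicas: int, port: int
) -> Dict[str, Any]:
    """Build a Kubernetes apps/v1 Deployment for a model-serving container."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            # The selector must match the pod template labels.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": port}],
                        }
                    ]
                },
            },
        },
    }


# Hypothetical model name and registry path, for illustration only.
manifest = model_serving_deployment(
    name="churn-model",
    image="registry.example.com/ml/churn-model:1.0",
    replicas=2,
    port=8080,
)
manifest_json = json.dumps(manifest, indent=2, sort_keys=True)
print(manifest_json)
```

Because Kubernetes accepts JSON manifests as well as YAML, the same output can be saved to a file and applied with `kubectl apply -f` against an on-premises cluster or a cloud-hosted one, with only the target cluster context changing.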

Conclusion

In this article, we saw the benefits of the hybrid cloud as a platform for machine learning training and deployment. The uniformity of the operating platform across on-premises vSphere/VCF and VMware Cloud on AWS benefits the data science department by reducing the number of variables in play as a model moves through development, into deployment and becomes subject to version and change control. We see that ML tool vendors are testing their platforms and tools for ML on vSphere on-premises and in the public cloud.

Open source offerings make sense to many enterprises and these too have undergone performance tests on vSphere and on VMware Cloud on AWS, showing very good performance in both cases. Finally, we saw the importance of Kubernetes as a deployment platform for the tools, platforms and final trained model in production. In part 1 of this series we saw tabular data as an ideal target for ML on VMware Cloud on AWS and in part 2 we explored model explanation on the same platform. Together, these scenarios make a powerful case for VMware’s hybrid cloud as a basis for your machine learning work.

Call to Action

If the data scientists or machine learning practitioners at your organization are not familiar with VMware vSphere or VMware Cloud on AWS as a platform for their machine learning tooling, you can use this series of articles to introduce them to these platforms. You can also evaluate VMware Cloud on AWS by taking a hands-on lab.

There are also in-depth technical articles on distributed machine learning, on the use of neural networks and hardware accelerators and high performance computing on VMware available at this site.