Part 1: The what, why and how of Serverless Offerings on VMware Cloud Director

Why Serverless?

Developers love ready-to-consume services that make their lives easier. These services let them focus on what they are supposed to do: develop software that solves business and real-world problems. Whether it’s deploying easy-to-use Docker containers on Kubernetes, using serverless options like Functions-as-a-Service for event-driven computing, or consuming ready-to-use Platform-as-a-Service offerings like databases or middleware, developers generally prefer to deal with as few of the underlying infrastructure components and complexities as possible.

For service providers, offering these types of services can be new and challenging. Traditionally, they have focused on providing superior Infrastructure-as-a-Service, with IaaS offerings built on VMware Cloud Director and the VMware Cloud Provider Platform at the core of their portfolio. But customers are increasingly looking for additional services at a higher level of abstraction, which they find in the broad and fast-growing portfolios of hyperscalers like Amazon Web Services, Microsoft Azure and Google Cloud Platform. At the same time, many customers prefer to deploy, run and manage their critical workloads and custom apps with a local or regional provider they know and trust.

In this blog series, you will learn how service providers can offer their customers the best of both worlds: innovative developer services on their established, VMware Cloud Verified platform through the VMware Cloud Provider program. This lets them broaden their portfolio, reach new buyer personas in development and DevOps teams, and run more of their customers’ workloads.

High Level Solution Overview

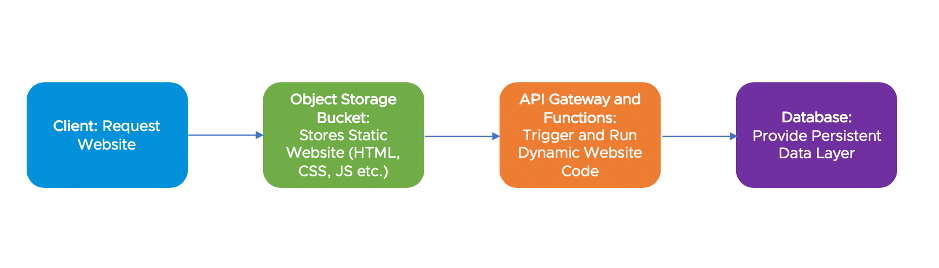

Let’s look at a common example of a serverless, cloud-native application that a developer would like to deploy: a serverless web application. On AWS, developers would store static website content such as HTML, CSS and JavaScript in an Amazon S3 object storage bucket, which can be accessed natively over HTTP(S). Persistence would be achieved by an AWS Lambda function that stores data in an Amazon DynamoDB NoSQL database. These Lambda functions are triggered when the static JavaScript calls an Amazon API Gateway endpoint. The result is a fully functional web application that doesn’t require running or maintaining a single VM or server. In a nutshell, a developer needs four services to build this very common architecture:

- Object Storage

- NoSQL Database Service

- Functions-as-a-Service

- API Gateway
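To make the request flow concrete, here is a minimal, in-process Python sketch of the four building blocks. It is an illustration under stated assumptions, not AWS or VCD code: `ApiGateway`, `DocumentStore`, `save_item` and `get_item` are hypothetical stand-ins for the API gateway, the NoSQL database and two FaaS functions, and the static frontend is represented only by the calls it would make.

```python
"""Minimal in-process sketch of the serverless web app request flow.

All names are hypothetical stand-ins, not AWS or VCD APIs.
"""
import json
import uuid
from typing import Callable, Dict, Optional, Tuple

Response = Tuple[int, str]  # (HTTP status code, JSON body)
Handler = Callable[[dict], Response]


class DocumentStore:
    """Stand-in for a NoSQL database such as DynamoDB or MongoDB."""

    def __init__(self) -> None:
        self._docs: Dict[str, dict] = {}

    def put(self, doc_id: str, doc: dict) -> None:
        self._docs[doc_id] = dict(doc)  # store a copy of the document

    def get(self, doc_id: str) -> Optional[dict]:
        return self._docs.get(doc_id)


class ApiGateway:
    """Stand-in for an API gateway such as Amazon API Gateway or Kong."""

    def __init__(self) -> None:
        self._routes: Dict[Tuple[str, str], Handler] = {}

    def route(self, method: str, path: str, handler: Handler) -> None:
        self._routes[(method.upper(), path)] = handler

    def call(self, method: str, path: str, body: str = "",
             query: Optional[Dict[str, str]] = None) -> Response:
        handler = self._routes.get((method.upper(), path))
        if handler is None:
            return 404, json.dumps({"error": "no route"})
        # Forward the request to the function as a simple event dict.
        return handler({"body": body, "query": query or {}})


store = DocumentStore()


def save_item(event: dict) -> Response:
    """FaaS-style handler: persist a JSON object and return its new id."""
    try:
        doc = json.loads(event["body"])
    except json.JSONDecodeError:
        return 400, json.dumps({"error": "invalid JSON"})
    if not isinstance(doc, dict):
        return 400, json.dumps({"error": "expected a JSON object"})
    doc_id = str(uuid.uuid4())
    store.put(doc_id, doc)
    return 201, json.dumps({"id": doc_id})


def get_item(event: dict) -> Response:
    """FaaS-style handler: look up a stored object by its id."""
    doc = store.get(event["query"].get("id", ""))
    if doc is None:
        return 404, json.dumps({"error": "not found"})
    return 200, json.dumps(doc)


gateway = ApiGateway()
gateway.route("POST", "/items", save_item)
gateway.route("GET", "/items", get_item)
```

In a real deployment, the static JavaScript would issue these calls over HTTP to the gateway endpoint, and each handler would run as a separately deployed function rather than in the same process.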

Here is how this architecture looks from a high level:

Let’s explore the different options that service providers can use within the VMware Cloud Provider program. These enable providers to easily build, run and manage such a set of developer services for their customers.

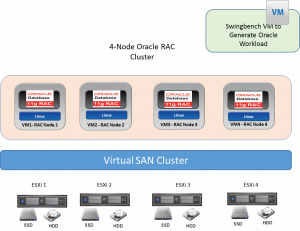

Behind every serverless option that a developer consumes, even in a hyperscale cloud like AWS, there is of course a server running somewhere to provide the service. Serverless simply means that the consumer of the service, i.e., the developer, does not have to care about these servers: the service abstracts away the backend complexities and components, and the developer consumes the resources in a serverless way.

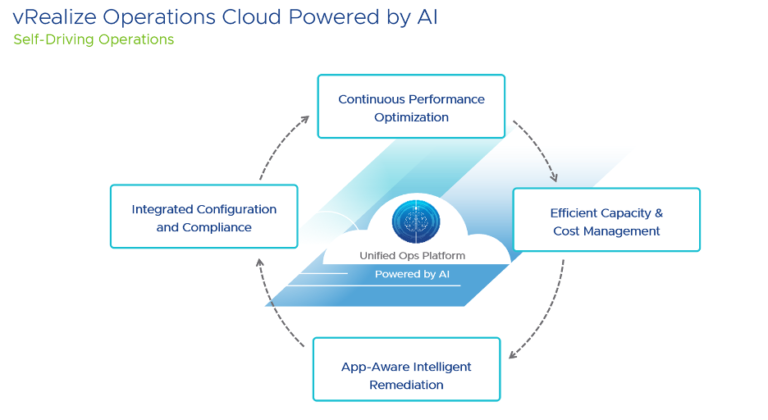

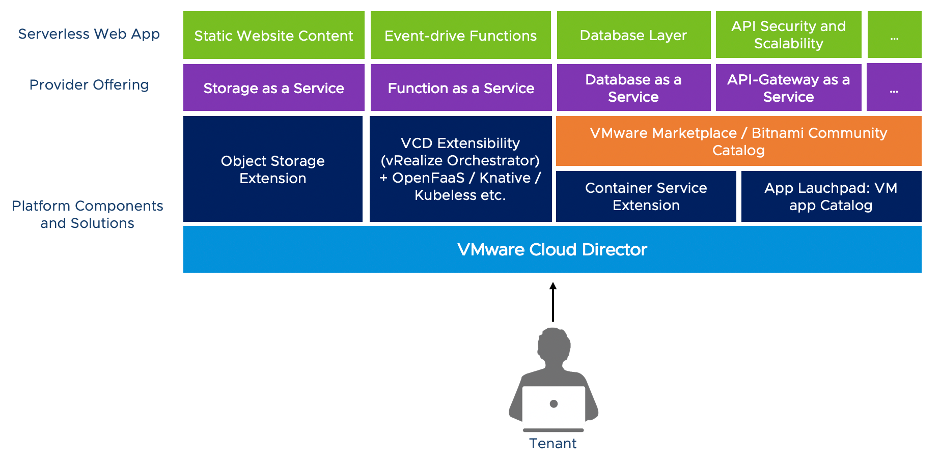

Because of that, the first thing providers need is still a robust IaaS platform that allows them to provision and manage the services they want to offer. VMware Cloud Director, built on the VMware SDDC, gives providers exactly this robust IaaS layer. But how can a provider build and offer the actual serverless options for the architecture described above?

VMware Cloud Provider Platform for Serverless

Let’s start with object storage, which can easily be provided through the VMware Cloud Director Object Storage Extension. It gives developers ready-to-consume object storage on VCD that is compatible with the S3 API, the de facto standard for cloud object storage, so they can store all the static website content and make it accessible over HTTP. That part is simple.
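Because the storage speaks the S3 protocol, each static file ends up at a plain HTTPS URL. The sketch below builds such URLs using the two standard S3 addressing styles. The endpoint host and bucket name are hypothetical, and it assumes the objects have been shared for anonymous read; check which addressing style and endpoint your Object Storage Extension deployment actually exposes.

```python
from urllib.parse import quote


def object_url(endpoint: str, bucket: str, key: str,
               virtual_hosted: bool = False) -> str:
    """Build the HTTPS URL of an object in an S3-compatible bucket.

    Path-style:     https://<endpoint>/<bucket>/<key>
    Virtual-hosted: https://<bucket>.<endpoint>/<key>
    `endpoint` is the host name of the (hypothetical) S3-compatible service.
    """
    # Percent-encode the key but keep "/" so prefixes act as path segments.
    encoded_key = quote(key.lstrip("/"), safe="/")
    if virtual_hosted:
        return f"https://{bucket}.{endpoint}/{encoded_key}"
    return f"https://{endpoint}/{bucket}/{encoded_key}"
```

For example, `object_url("s3.example-provider.com", "my-site", "index.html")` yields the path-style URL a browser would load for the site's entry page.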

For the other services, we can leverage the Bitnami Community Catalog, available through the VMware Managed Service Provider program. It allows service providers to offer well-architected and secure applications and frameworks as a service: Bitnami provides access to more than 180 pre-packaged, tested and security-hardened open-source solutions, making it easy to broaden the portfolio of services on top of the Infrastructure-as-a-Service offering. For our serverless web app example, we can use MongoDB or Cassandra as NoSQL databases and Kong as an open-source API gateway. These are available as vApp Templates that can be published through the VCD catalog for self-service by tenant developers.

Some Bitnami catalog items are packaged not only as vApp Templates for the VCD catalog but also as Helm charts, which makes them easy to deploy in containers on Kubernetes. Chances are that a developer will want to deploy additional components in containers anyway, so why not offer Kubernetes-as-a-Service to begin with? Providers can use the VMware Cloud Director Container Service Extension (CSE) for this. CSE lets service providers offer Kubernetes on VMware Cloud Director so that customers can easily deploy containers on a managed Kubernetes cluster. Providers can then provision additional Bitnami components via Helm charts, for example MongoDB in a containerized environment or MinIO as an alternative, open-source S3-compatible object storage solution.

Extending the Platform

A provider could also deploy Kubeapps into the Kubernetes cluster to make these Helm charts easy to consume and manage. Kubeapps is a community-supported application catalog for Helm charts from Bitnami. It provides access to different chart repositories and makes Helm-packaged applications easy to deploy and manage, whether by customers in self-service environments or by providers in managed service and serverless environments.

We are still missing a key service: Functions-as-a-Service (FaaS). Providers can use the VCD extensibility framework with vRealize Orchestrator to build FaaS using, for example, OpenFaaS, Knative or Kubeless, another community-supported Bitnami project. All of these options run on top of the Kubernetes-as-a-Service offering described above, based on the VCD Container Service Extension.
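As an illustration of what a tenant developer would deploy on such a FaaS platform, here is a minimal Python function. It assumes the `handle(req)` entry point of OpenFaaS's classic `python3` template, where the request body arrives as a string; other frameworks such as Knative use different conventions. The payload fields (`email`, `message`) are hypothetical.

```python
import json


def handle(req: str) -> str:
    """Validate a JSON form submission and return a JSON response.

    Assumes the OpenFaaS classic python3 template convention: the request
    body is passed in as a string and the returned string is the response.
    The payload shape {"email": ..., "message": ...} is hypothetical.
    """
    try:
        payload = json.loads(req)
    except json.JSONDecodeError:
        return json.dumps({"ok": False, "error": "invalid JSON"})
    if not isinstance(payload, dict):
        return json.dumps({"ok": False, "error": "expected a JSON object"})
    # Collect required fields that are absent or empty.
    missing = [f for f in ("email", "message") if not payload.get(f)]
    if missing:
        return json.dumps({"ok": False,
                           "error": "missing fields: " + ", ".join(missing)})
    # A real function would persist the payload here, e.g. to MongoDB or Cassandra.
    return json.dumps({"ok": True, "received": sorted(payload)})
```

Keeping the function free of infrastructure details is the point of FaaS: the platform handles scaling and HTTP plumbing, while the API gateway in front of it handles routing.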

Let’s put it all together:

Stay tuned for parts 2 and 3 of this blog series, where we will go into more detail. Part 2 covers the implementation architecture and the developer experience using the described services. The final part 3 looks at managed service options and further considerations for providers.