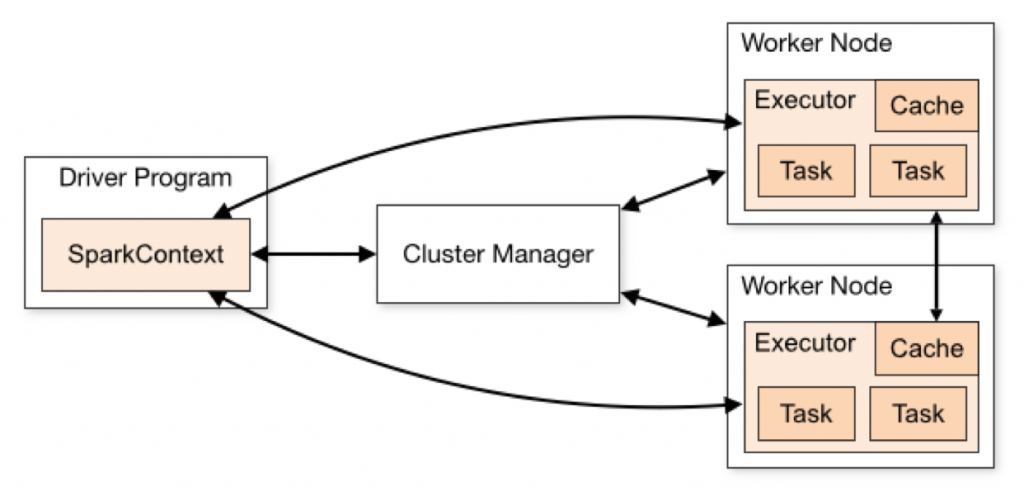

Apache Spark is a popular application platform for scalable, parallel computation. It can be configured to run either in standalone form, using its own cluster manager, or within a Hadoop/YARN context. Interest is now growing in the combination of Spark with Kubernetes, where Kubernetes acts as the job scheduler and resource manager, replacing the YARN resource manager that has traditionally controlled Spark’s execution within Hadoop. This article focuses on the Spark with Kubernetes combination and characterizes its performance for machine learning workloads.

The Spark core Java processes (Driver, Worker, Executor) can run either in containers or as non-containerized operating system processes. Both forms have been shown to execute well on VMware vSphere, whether under the control of Kubernetes or not. When Kubernetes is used, it plays the role of Spark's pluggable cluster manager.

This recent performance testing work, done by Dave Jaffe, Staff Engineer on the Performance Engineering team at VMware, compares the performance of a Spark cluster under load when it executes under Kubernetes control with its performance when it executes outside of Kubernetes control. Without Kubernetes present, standalone Spark uses the cluster manager built into Apache Spark. The Kubernetes platform used here was provided by Essential PKS from VMware. The full technical details are given in this paper.
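To make the two deployment forms concrete, the sketch below builds a `spark-submit` command line for each one. It is illustrative only and is not taken from the test setup in the paper: the helper name `spark_submit_args`, the host names, the container image, the application path, and the resource settings are all hypothetical. The only real conventions it relies on are Spark's master URL schemes (`spark://` for the standalone cluster manager, `k8s://` for Kubernetes) and standard Spark configuration keys.

```python
from typing import List


def spark_submit_args(
    cluster_manager: str,
    host: str,
    app: str,
    image: str = "example/spark:latest",  # hypothetical container image
) -> List[str]:
    """Build a spark-submit argument list for one of the two cluster managers."""
    if cluster_manager == "standalone":
        # Spark's built-in cluster manager; 7077 is its default master port.
        master = f"spark://{host}:7077"
        extra: List[str] = []
    elif cluster_manager == "kubernetes":
        # Kubernetes as the cluster manager: the master URL points at the
        # Kubernetes API server (port 6443 is assumed here), and the Spark
        # processes run in containers created from the given image.
        master = f"k8s://https://{host}:6443"
        extra = [
            "--deploy-mode", "cluster",
            "--conf", f"spark.kubernetes.container.image={image}",
        ]
    else:
        raise ValueError(f"unknown cluster manager: {cluster_manager}")

    # Resource settings common to both forms (values are placeholders).
    common = [
        "--conf", "spark.executor.memory=4g",
        "--conf", "spark.executor.cores=2",
    ]
    return ["spark-submit", "--master", master, *extra, *common, app]


if __name__ == "__main__":
    print(" ".join(spark_submit_args(
        "standalone", "spark-master.example.com", "/opt/app/job.jar")))
    print(" ".join(spark_submit_args(
        "kubernetes", "k8s-api.example.com", "local:///opt/app/job.jar")))
```

Under these assumptions the application and its resource settings stay the same in both cases. Only the master URL and the Kubernetes-specific options change, which is what lets the same workload be compared across the two forms.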

A well-known machine learning workload, ResNet50, was used to drive load through the Spark platform in both deployment cases. The BigDL framework from Intel was used to run this workload. The results of the performance tests show that the difference between the two forms of deploying Spark is minimal.

Spark deployed with Kubernetes, Spark standalone, and Spark within Hadoop are all viable application platforms to deploy on VMware vSphere, as has been shown in this and previous performance studies. Each has its own characteristics, and at this time the industry is innovating mainly in the Spark with Kubernetes area.