This article describes the live migration (vMotion) of a virtual machine that has a machine learning (ML) training exercise running in it from one physical host server to another, without interruption. If you are already familiar with VMware, then a vMotion like this will be well known to you, as it is vital to IT operations in most enterprises today. If you are a data scientist or a machine learning practitioner, you may be new to VMware Cloud on AWS and to the vMotion functionality, so we give a brief introduction to these technologies below. vMotion is available (and extremely popular) on on-premises VMware vSphere as well as on VMware Cloud on AWS. It can also be used to move VMs between those two environments, if the appropriate network connections are in place.

This article complements two earlier posts (1) on the benefits of VMware for H2O tools and (2) on the deployment of a trained model from H2O to a Kubernetes-managed cluster.

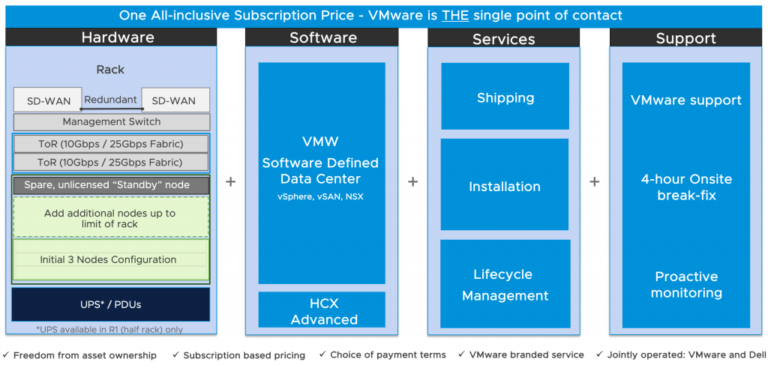

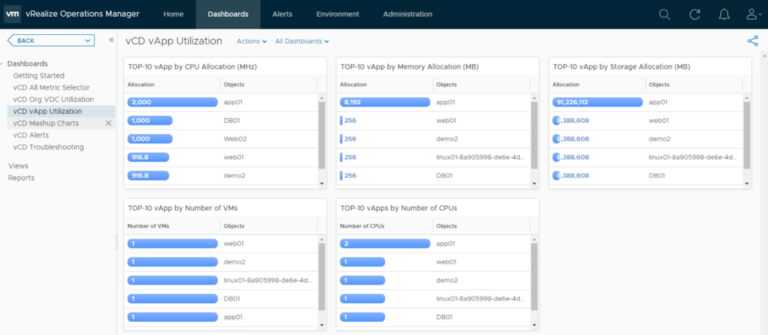

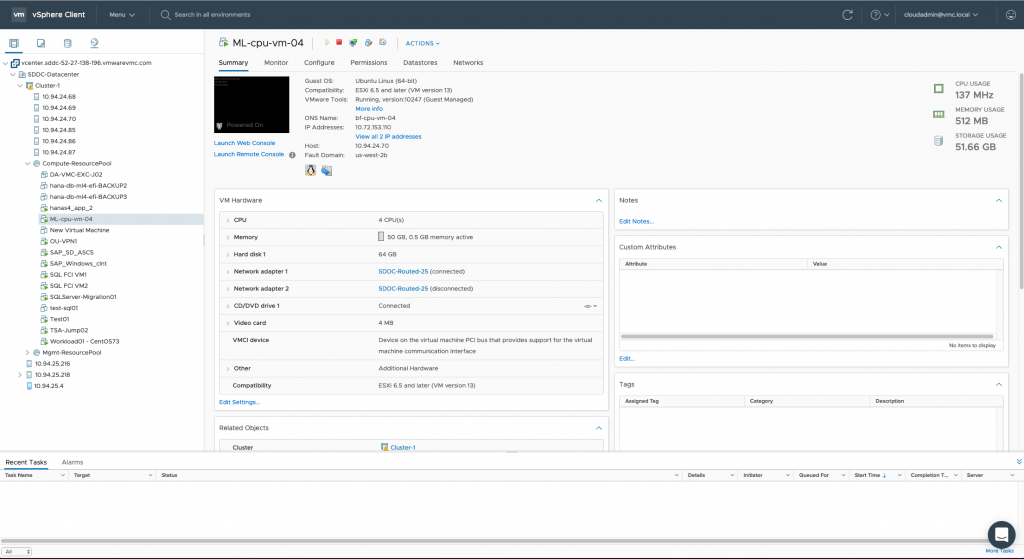

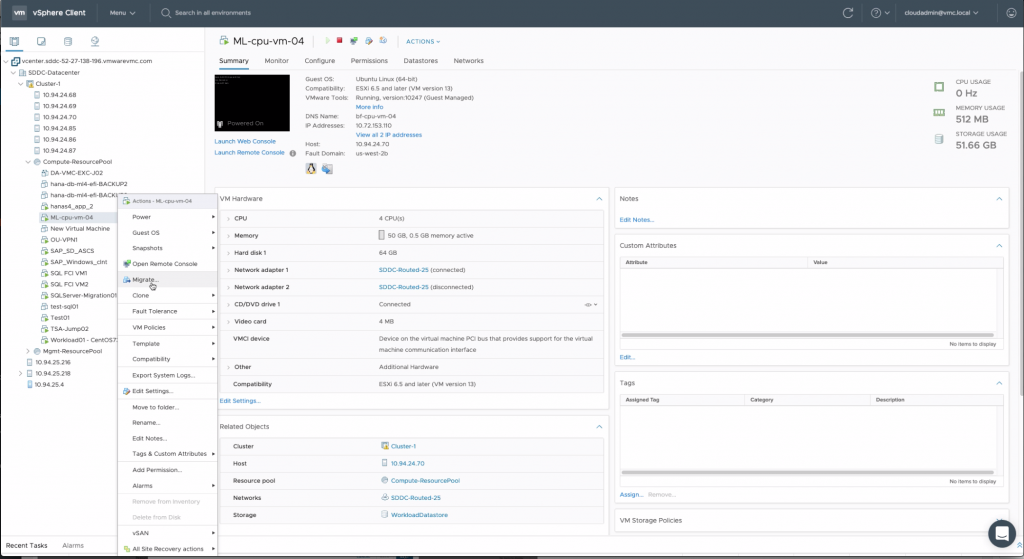

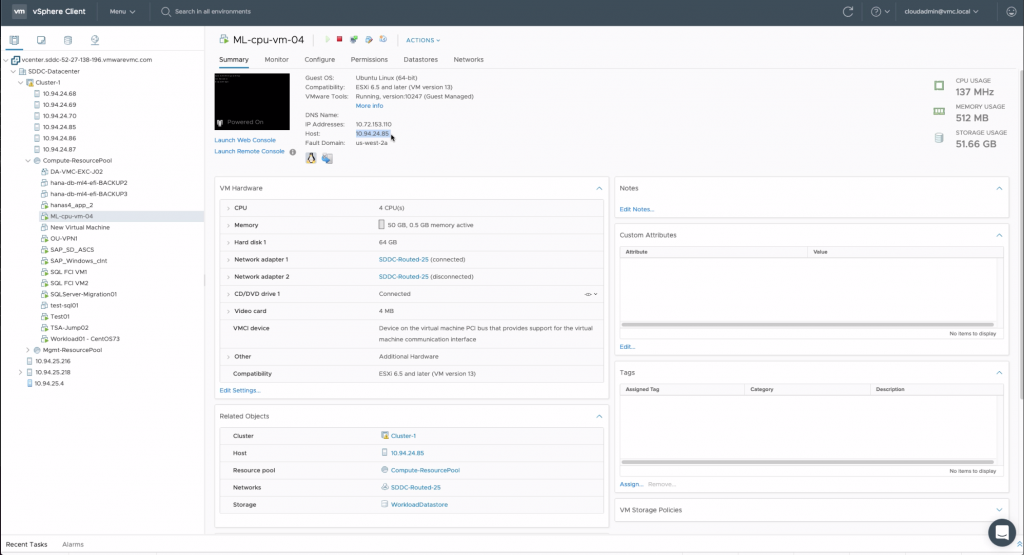

VMware Cloud on AWS is a managed service from VMware in the public cloud. The full VMware software stack (the “Software Defined Data Center” or SDDC) is deployed by VMware onto bare metal servers that are located at AWS sites and is subsequently managed on the customer’s behalf by VMware. You can see an SDDC Datacenter deployed on several hosts above. This gives the end user the same experience of VMware in the cloud as they would have with an on-premises deployment of the SDDC stack. The components of that stack (the ESXi hypervisor, vSAN storage and NSX networking) are all included and configured in each VMware Cloud on AWS SDDC, which is the unit of deployment. As a result, what a system administrator experiences with VMware Cloud on AWS is the familiar vSphere Client interface to management functions, as we see it above.

We focus here on a virtual machine named “ML-cpu-vm-04” in the left navigation pane of the vSphere Client interface. This VM is running the H2O Driverless AI tool for a data scientist. It will be migrated live from one host server to another while the H2O tool runs a training experiment on a dataset in it. The server hosts for the various VMs are shown ordered by their IP addresses in the top left side of that pane (e.g. 10.94.24.70 is one physical host). We note in the center pane that ML-cpu-vm-04 is currently running on the host server with IP address 10.94.24.70. That host server is located in a Fault Domain (which maps to an availability zone) named “us-west-2b”, as seen beneath its IP address in the center. We can have as many host servers in an SDDC as we wish to; here we happen to have nine host servers in our SDDC.

The vMotion technology, built into VMware vSphere, has been a core feature since the very early days of vSphere. Its purpose is to move VMs and their workloads from one host server to another without interrupting them, and it is used for several different reasons. It is used under the covers by the Distributed Resource Scheduler (DRS) in vSphere to balance load across servers. It can also be used to move a VM away from one server to another when hardware maintenance is required on the former. This can be done manually or in a scripted fashion. We show the manual functionality here. The vMotion feature is important for the data scientist because they do not want a long-lived training experiment, running in a tool such as H2O’s Driverless AI, TensorFlow or PyTorch, to be interrupted by such a maintenance event. They want their ML experiment(s) to continue, without interruption, while the movement of the VM is taking place. We also have the ability to move multiple workloads at once, by executing vMotion in parallel on several VMs at a time, if we need to. Finally, we can use vMotion to move a VM from one Fault Domain or AWS availability zone to another, as we will see.
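To make the maintenance scenario more concrete, here is a minimal planning sketch in plain Python. It is not how DRS works internally: it simply assumes a hypothetical inventory that maps each host to the VMs on it, sends each VM from the host being drained to whichever other host currently holds the fewest VMs (a crude stand-in for real utilization data), and groups the moves into batches so that only a few migrations run in parallel. The names `Move`, `plan_evacuation` and `max_parallel` are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class Move:
    vm: str
    source: str
    target: str


def plan_evacuation(placement, host_to_drain, max_parallel=2):
    """Return batches of moves that empty host_to_drain.

    placement maps a host name to the list of VM names on it
    (a hypothetical inventory, not a vSphere API object).
    """
    # Count VMs on every host except the one being drained.
    counts = {h: len(vms) for h, vms in placement.items() if h != host_to_drain}
    if not counts:
        raise ValueError("no other host available to receive the VMs")

    moves = []
    for vm in placement.get(host_to_drain, []):
        # Pick the host with the fewest VMs; break ties by host name.
        target = min(counts, key=lambda h: (counts[h], h))
        counts[target] += 1
        moves.append(Move(vm, host_to_drain, target))

    # Group the moves so that at most max_parallel run at the same time.
    return [moves[i:i + max_parallel] for i in range(0, len(moves), max_parallel)]
```

Each batch could then be handed to whatever mechanism actually starts the migrations, waiting for one batch to finish before the next begins.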

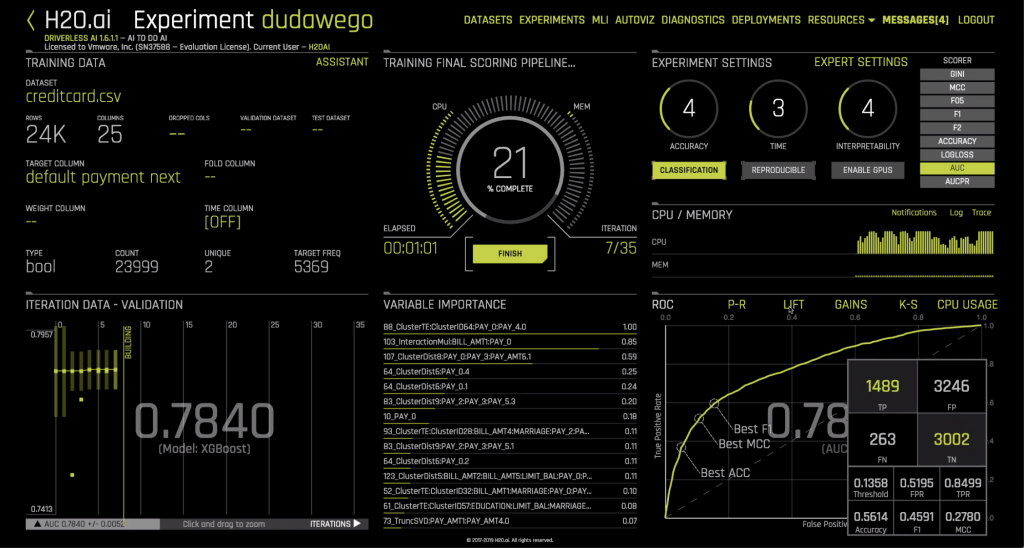

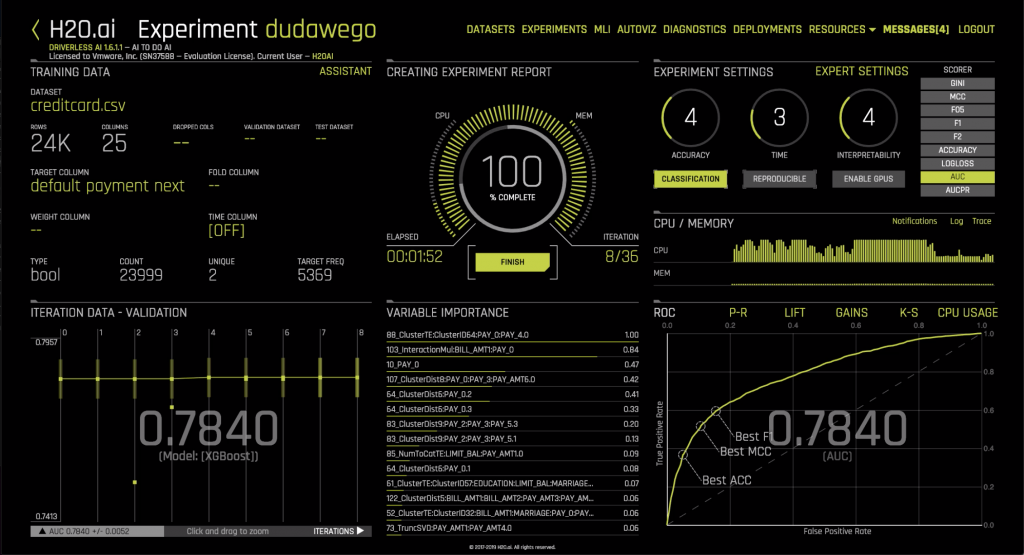

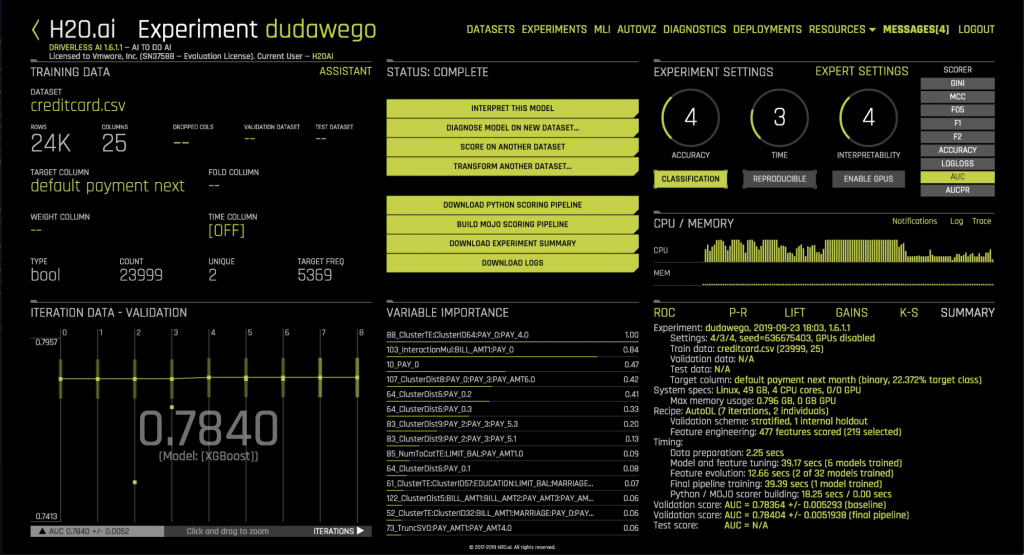

Here is the H2O Driverless AI tool executing the current training exercise on a set of training data in that virtual machine. We want to see this training experiment complete safely, although the VM it is running on is in motion, under the covers.

Now we proceed to migrate the VM to a different host server in the same SDDC. We know of another host server that is underutilized, and we can move the VM to it. We show the manual way to do vMotion here for explanatory purposes, but it can also be automated, as is done in DRS, which is described in detail in the VMware documentation. All we need to do is right-click on the VM’s icon, choose “Migrate”, and then choose the details of the migration.
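For readers who prefer scripting to the right-click menu, the following is a hedged sketch of what the same action might look like from Python. It assumes the `vm` and `target_host` arguments are pyVmomi managed objects (`vim.VirtualMachine` and `vim.HostSystem`) that have already been looked up over an authenticated session, which is not shown. `MigrateVM_Task` is the vSphere API method for a compute-only migration; the helper names `migrate_compute` and `wait_for_task` are ours, and the polling loop assumes the task state compares equal to the strings `"success"` and `"error"`. Permissions in a VMware Cloud on AWS SDDC may also restrict what a given account can do.

```python
import time


def migrate_compute(vm, target_host, priority):
    """Start a compute-only vMotion and return the task object.

    With pyVmomi, priority would typically be
    vim.VirtualMachine.MovePriority.highPriority.
    """
    # pool=None leaves the VM in its current resource pool.
    return vm.MigrateVM_Task(pool=None, host=target_host, priority=priority)


def wait_for_task(task, poll_seconds=2.0, timeout_seconds=600.0):
    """Poll task.info.state until the task succeeds or fails.

    Returns True on success and False on error; raises TimeoutError
    if neither state is reached before the timeout.
    """
    deadline = time.monotonic() + timeout_seconds
    while time.monotonic() < deadline:
        state = task.info.state
        if state == "success":
            return True
        if state == "error":
            return False
        time.sleep(poll_seconds)
    raise TimeoutError("migration task did not finish in time")
```

Keeping the two helpers free of any connection logic makes them easy to exercise with stand-in objects before pointing them at a real vCenter.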

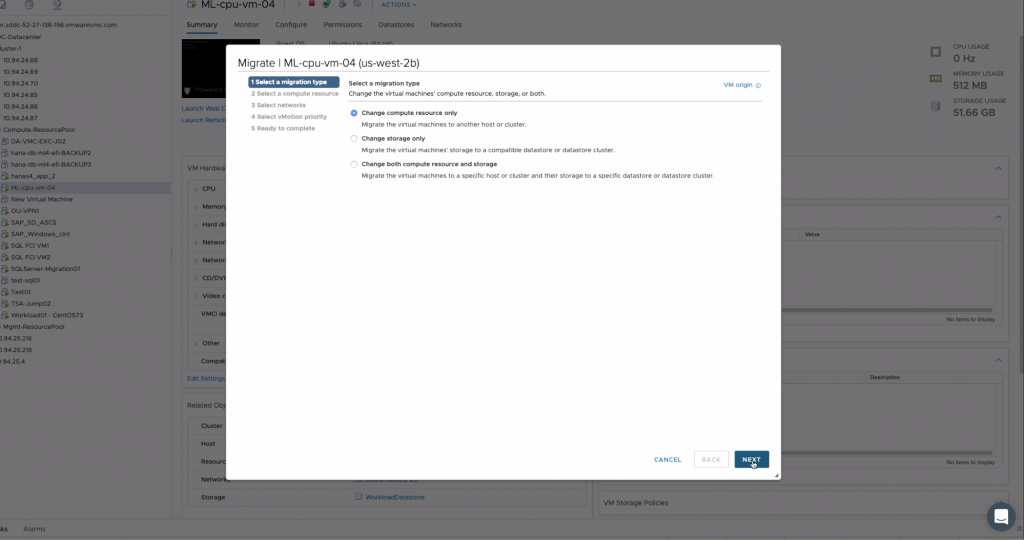

For this example, we chose to move only the compute resources of the VM. We could also move the storage for the VM, if we needed to.

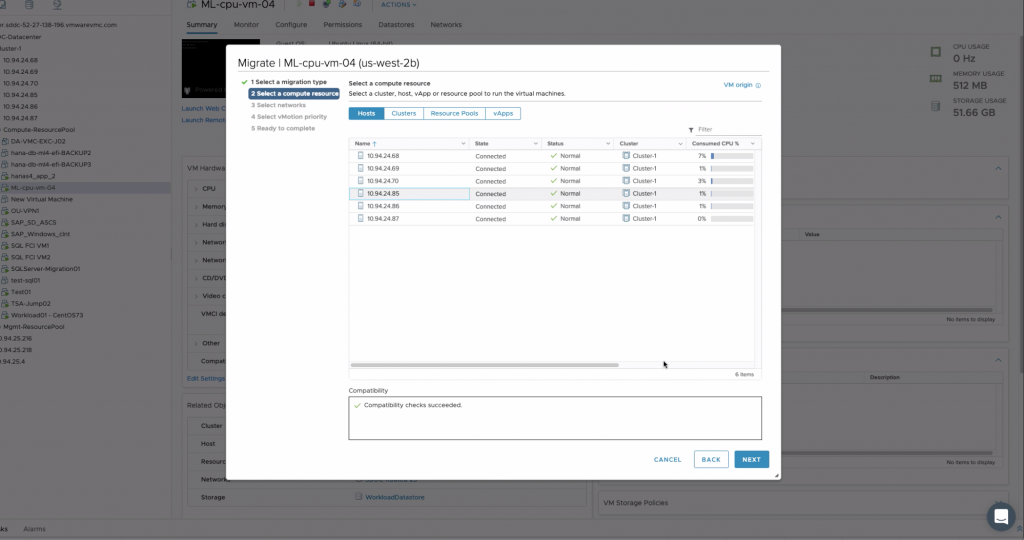

In the following dialog, we choose the host server that the VM will be moved to. We chose the “10.94.24.85” host server because of its low utilization; a target host can also be chosen for its Fault Domain, if need be.
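The selection criteria mentioned here (low utilization, and optionally a particular Fault Domain) can be expressed as a small function. This is only an illustrative sketch: the `HostInfo` record and its utilization fields are hypothetical, and in practice the numbers would have to come from vCenter or a monitoring tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HostInfo:
    name: str
    fault_domain: str
    cpu_used_pct: float
    mem_used_pct: float


def pick_target(hosts, current_host, fault_domain=None):
    """Return the least-utilized candidate host, or None if there is none.

    hosts is an iterable of HostInfo records. If fault_domain is given,
    only hosts in that Fault Domain are considered.
    """
    candidates = [
        h for h in hosts
        if h.name != current_host
        and (fault_domain is None or h.fault_domain == fault_domain)
    ]
    if not candidates:
        return None
    # Rank by the busier of CPU and memory; break ties by host name.
    return min(
        candidates,
        key=lambda h: (max(h.cpu_used_pct, h.mem_used_pct), h.name),
    )
```

Ranking on the higher of the two percentages is a deliberate simplification, so that a host with spare CPU but nearly full memory is not picked by mistake.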

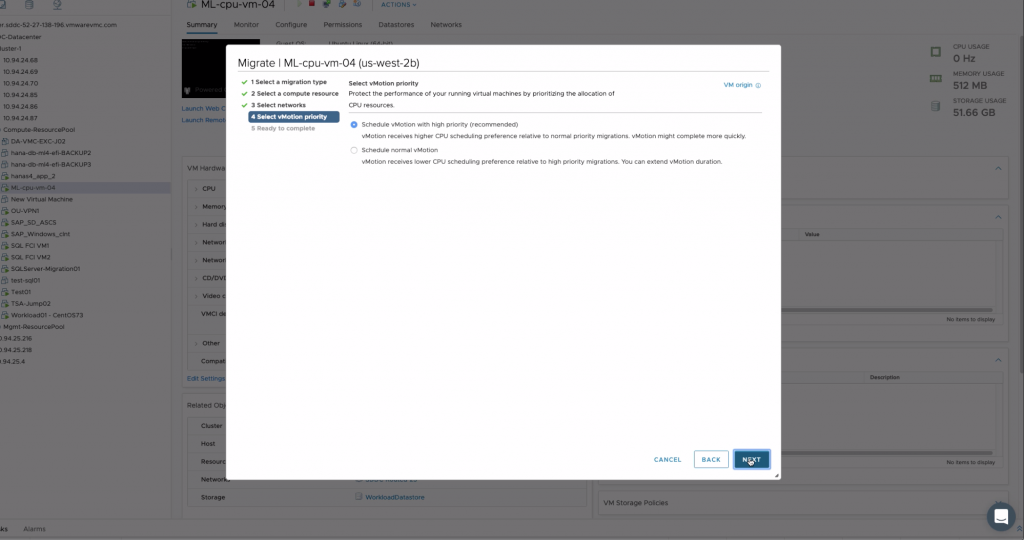

We are asked to choose the priority for this vMotion, and we decide on high priority.

Once this process completes, the VM is located on the new host server, with IP address 10.94.24.85, as we see below.

During the vMotion migration, the training experiment in the H2O tool continued to run, without interruption or any noticeable performance impact. Notice that the Fault Domain in which the new host lives is different from the original host’s Fault Domain. These Fault Domains in VMware Cloud on AWS reside in separate AWS Availability Zones.
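One simple way to back up an observation like this with data is to look at the timestamps at which the training job reports progress, and check that no unusually long gap overlaps the migration window. The sketch below assumes you already have such timestamps as plain numbers in seconds (for example, parsed from an experiment log; no particular log format is assumed) and that you know when the vMotion started and ended. The function names and the threshold are illustrative.

```python
def gaps_during(timestamps, window_start, window_end):
    """Return the gaps between consecutive progress entries that
    overlap the interval [window_start, window_end]."""
    ordered = sorted(timestamps)
    return [
        later - earlier
        for earlier, later in zip(ordered, ordered[1:])
        if earlier <= window_end and later >= window_start
    ]


def ran_without_stall(timestamps, window_start, window_end, max_gap_seconds):
    """True if progress was reported around the window and no
    overlapping gap exceeded max_gap_seconds."""
    gaps = gaps_during(timestamps, window_start, window_end)
    # No overlapping pair means there is no evidence either way,
    # so report False rather than claiming success.
    return bool(gaps) and max(gaps) <= max_gap_seconds
```

Comparing the gaps that overlap the window, rather than only the entries inside it, means a stall that spans the whole migration is still detected.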

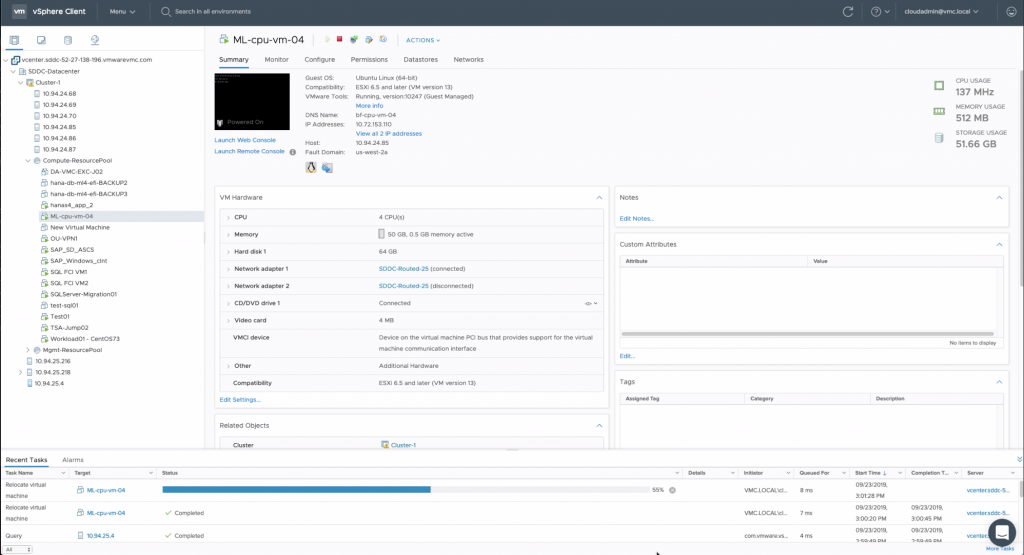

For completeness, we ran the training experiment again and performed a vMotion in the reverse direction (as may be done once a hardware fix is complete, for example). Here is the second vMotion, back to the source host server, in progress.

At the end of these vMotion actions, the ML experiment concluded successfully.

The data scientist can now decide to proceed with the production deployment of their trained scorer pipeline, as described in the articles on H2O in a Spark runtime context or in a REST and Kubernetes one. Alternatively, they may choose to continue experimenting with the model until it is further refined and ready for deployment. Multiple data scientists can work in parallel in this way on separate virtual machines running on the same cluster, with separate versions of their ML tool, or separate training datasets, customized for their own use. This gives the data scientist and data engineer the safe environment for experimentation that they need to do their job well.

Conclusion

In this article, we saw the H2O Driverless AI tool operating in virtual machines on vSphere. We showed a VM being moved from one host server to another, where the hosts are in different AZs. The physical host servers are managed using the VMware Software Defined Data Center stack, which is the controlling software for VMware Cloud on AWS. We learned that VMware Cloud on AWS is a managed service on bare metal servers, available at multiple AWS sites, that is managed by VMware on a customer’s behalf. We saw a virtual machine running ML training being migrated twice using vMotion: once to evacuate it from one host to another, as would be done during a hardware maintenance event, for example, and once to return it to the original host, as would be done when that hardware-level work is complete. The ML training processes continued in the VM during those migrations. This whole VM migration scenario works in exactly the same way when VMs are hosted on on-premises vSphere. vMotion is used extensively on vSphere for load balancing in DRS and in other situations. This live vMotion process can also be used to migrate VMs across the WAN from on-premises vSphere to VMware Cloud on AWS, or the reverse, provided the correct network connections are in place. You can find more detail on this workload migration area here.

If you would like to learn more about VMware Cloud on AWS, you can take a lightning lab to explore it here.

You can view a video of the whole vMotion process for the ML training, as described above, here.