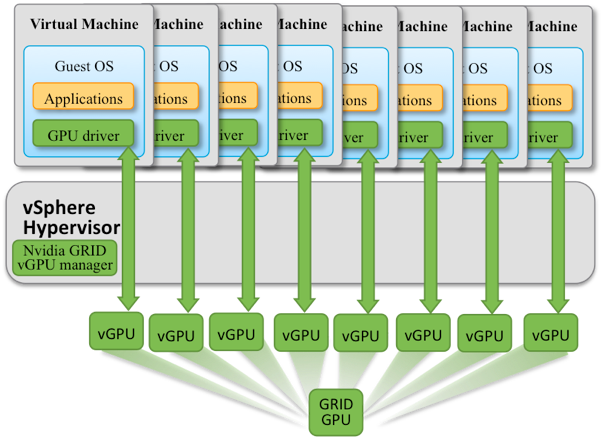

This article is a short introduction to a more detailed description of GPU sharing with NVIDIA GRID, written by members of our Performance Engineering team at VMware: Lan Vu, Uday Kurkure and Hari Sivaraman.

When they need to, data scientists can use GPUs on vSphere that are dedicated to a single virtual machine for their modeling work. Some machine learning workloads may well require that dedicated approach, and previous articles have shown it to be highly performant. However, many ML workloads and user types do not use a dedicated GPU continuously at its full capacity. This creates an opportunity for more than one virtual machine or user to share a single physical GPU. This article explores the performance of such a shared-GPU setup, supported on vSphere by the NVIDIA GRID software product, and presents performance test results showing that sharing GPUs is a feasible approach. It also describes further technical reasons for sharing a GPU among multiple VMs and gives best practices for deciding how a GPU should be shared. More information on setting up the NVIDIA GRID software for VMware vSphere is available here.