Introduction

Although Docker is the most prominent container platform and registry, it was designed primarily for microservices rather than for High Performance Computing (HPC). Singularity is a container solution designed from the ground up for machine learning, compute-driven analytics, and data science workloads commonly found in both HPC and Enterprise Performance Computing (EPC) environments. A Singularity container is encapsulated in a single file, making it highly portable and secure. Singularity is an open source container engine that is preferred for HPC workloads; it has a large, specialized user base and runs more than a million containers per day.

Figure 1: Singularity Containers (source: Singularity Home)

There is an increasing need for machine learning applications to leverage GPUs to speed up large computations. Traditionally, GPUs have been dedicated to individual users: one or more GPUs are mapped to a single user, which limits flexibility and leaves the GPUs underutilized. Container solutions do not have any built-in mechanisms for allocating and sharing GPUs within the host OS.

Virtualization, which is a proven way of facilitating sharing of hardware resources, can also be leveraged for sharing GPUs. Combining containers with vSphere virtual machines can bring together the best of both worlds:

- vSphere virtual machines with NVIDIA GRID or Bitfusion can get whole or partial GPU allocations

- Containers are a great packaging mechanism for applications

- By enclosing one container per virtual machine, a container can have access to a portion of a GPU

- Machine learning and deep learning applications and platforms can be packaged and distributed effectively as a container inside a virtual machine

In this study, we look at the performance impact of sharing GPUs.

Sharing NVIDIA GPUs in VMware Virtualized Environments

NVIDIA GPUs can currently be shared in two different ways in VMware virtual environments:

- NVIDIA GRID vGPU

NVIDIA virtual GPU (vGPU) lets organizations virtualize GPU workloads in modern business applications. NVIDIA GRID software shares the power of GPUs across multiple virtual machines, so GPUs managed by NVIDIA GRID can be accessed simultaneously by multiple deep learning applications and users. Note that while GPU memory is partitioned to create vGPUs, each vGPU is given time-sliced access to all of a GPU’s compute resources. This allows for better utilization of GPU resources in virtualized environments.

- Bitfusion

Bitfusion on VMware vSphere provides the capability to share partial, full, or multiple GPUs. It makes GPUs a first-class resource that can be abstracted, partitioned, automated, and shared much like traditional compute resources. GPU accelerators can be partitioned into multiple virtual GPUs of any size and accessed remotely by VMs over the network. With Bitfusion, GPU accelerators become part of a common infrastructure resource pool and are available for use by any VM in a vSphere-based environment.

In an earlier study, we looked at how Bitfusion could be leveraged to run machine learning applications with GPUs. In this study, we extend this sharing concept to Singularity HPC containers and measure the throughput benefits of sharing a GPU among containers.

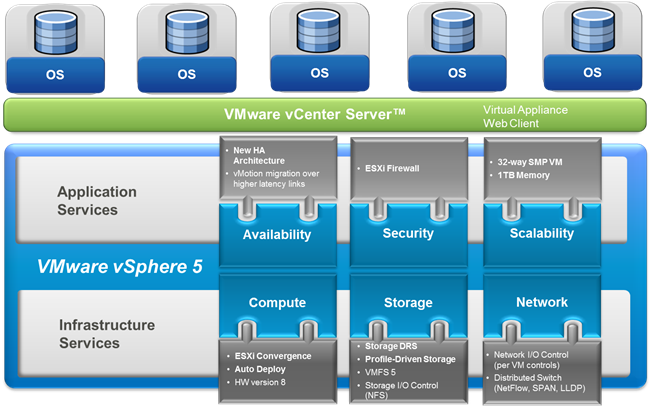

Infrastructure Components for Proof of Concept:

The hardware components of the proof of concept are shown below. A cluster of four Dell R730 servers served as the GPU cluster with passthrough enabled. Client virtual machines were housed in a generic vSphere cluster of four Dell R630 servers, backed by Pure M50-based Fibre Channel storage for VMFS and Extreme Networks 10 Gbps networking. All data at the guest level was stored on NFS storage hosted on a Pure Storage FlashBlade system.

Table 1: Hardware components for POC

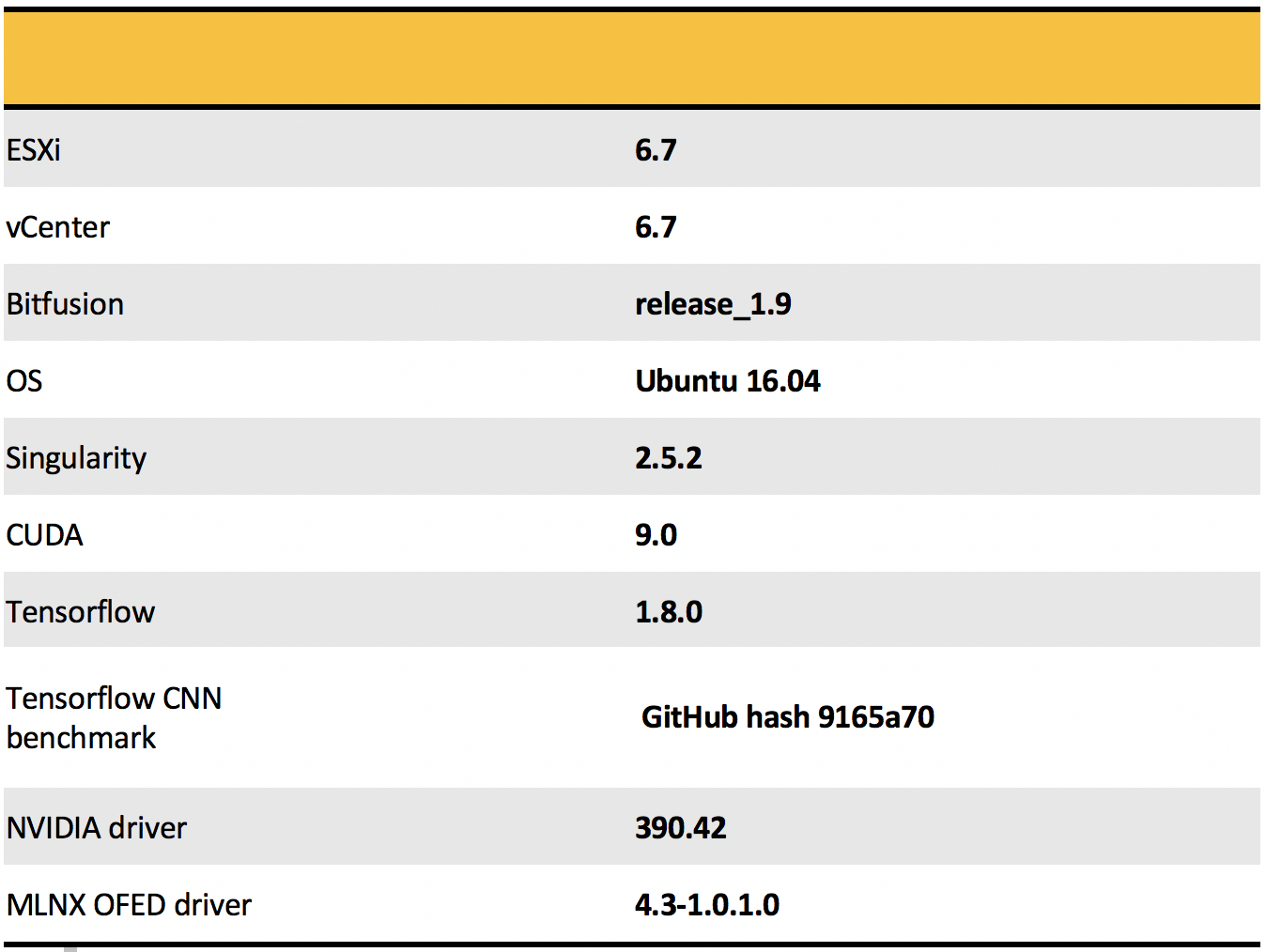

The vSphere version and other software components of the solution are shown below. The guest OS was Ubuntu and the version of Singularity used was 2.5.2. Bitfusion was used as the GPU sharing mechanism.

Table 2: Software Components used in POC

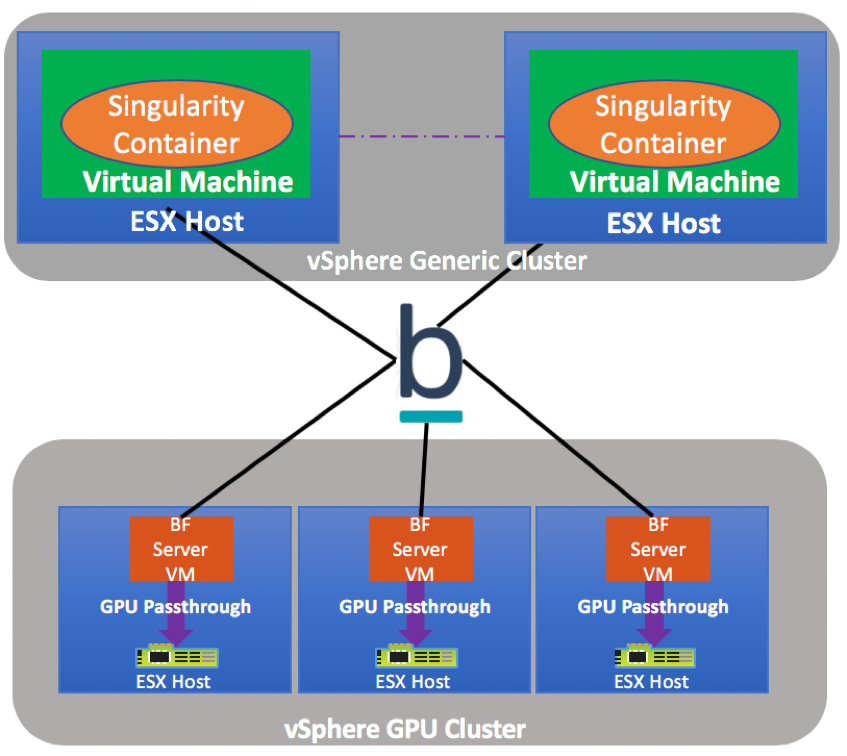

Logical Architecture

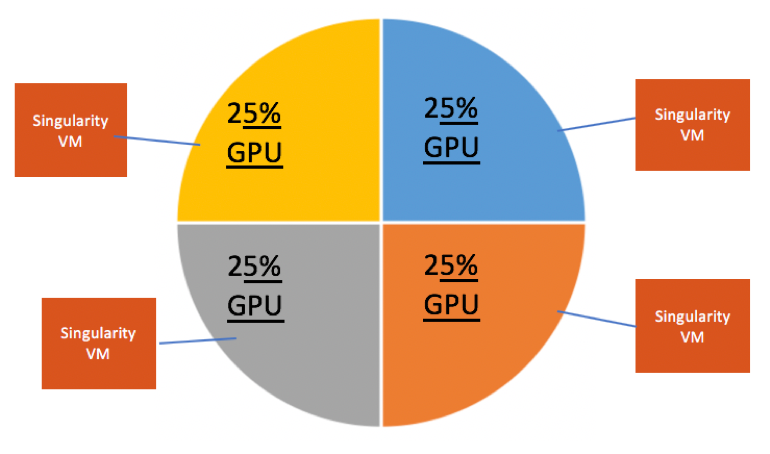

All GPU nodes were aggregated in a virtualized GPU cluster. Each GPU node had a single NVIDIA Tesla P100 GPU card attached in pass-through mode to a Bitfusion server virtual machine. The client virtual machines share access to the GPU resources over the 10 Gbps network. There is one Singularity container per VM, and each container leverages the partial or full GPUs allocated to its virtual machine. The Singularity containers package TensorFlow and the machine learning tools used in the proof of concept. Bitfusion is used for GPU sharing between the containers enclosed inside dedicated virtual machines.

Figure 2: Logical Schematic showing POC components

Building a TensorFlow container with GPU capability:

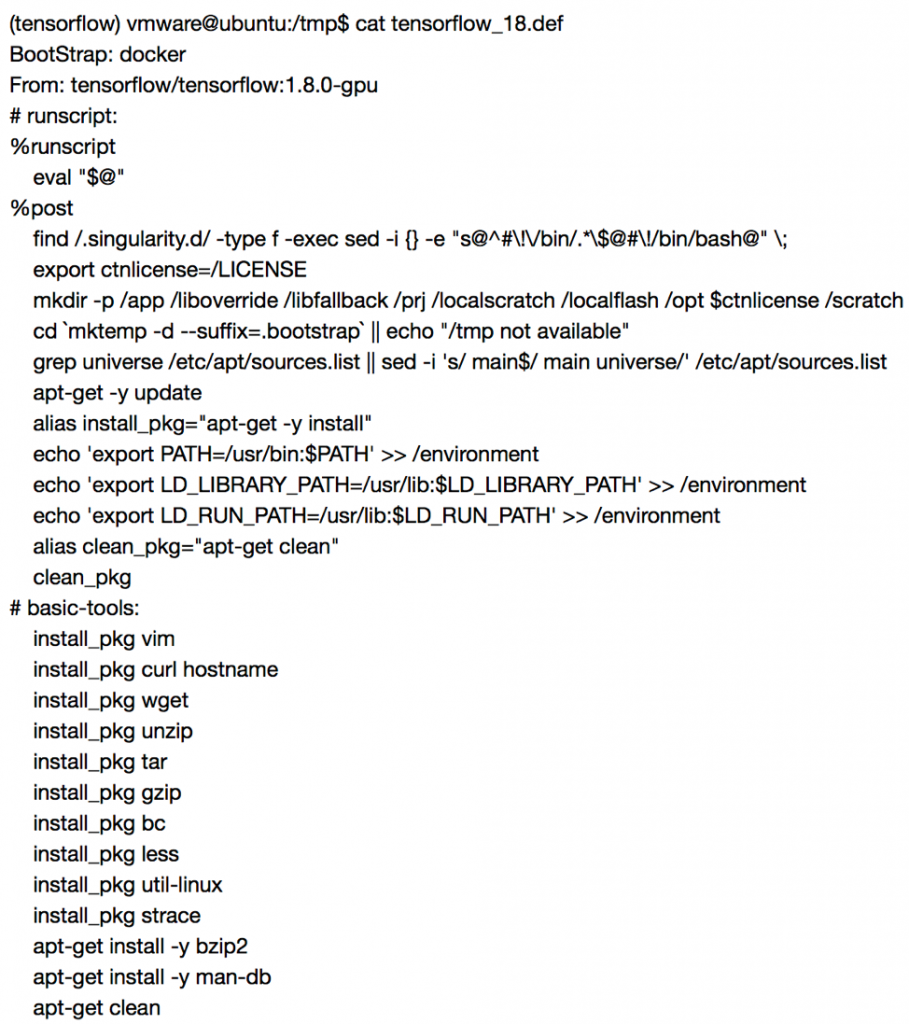

The big differentiators for containers are their portability and the ability to quickly build working application environments on the fly. In the following section, we show an example TensorFlow definition file for the container. This definition file is used to build a container image, which is then instantiated. The container image is portable and can be moved or copied to other host machines and executed without making any changes.

Container Definition File:

tensorflow_18.def

Figure 3: Screenshot showing TensorFlow container definition file

Build the container image from the definition file and start an instance:

- sudo /opt/singularity/bin/singularity build --sandbox tensorflow_18.simg tensorflow_18.def

- /opt/singularity/bin/singularity exec -B /ml_data/nzhang:/mnt --nv tensorflow_18.simg /bin/bash
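
For scripted rebuilds, the two commands above can be assembled programmatically. The following is a minimal sketch rather than part of the original proof of concept: the helper names `build_argv` and `exec_argv` are hypothetical, and the paths and flags are simply the ones shown in the commands above.

```python
import shlex

# Install path taken from the commands above.
SINGULARITY = "/opt/singularity/bin/singularity"


def build_argv(image, definition, sandbox=True):
    """Return the argv for building a container image from a definition file."""
    argv = ["sudo", SINGULARITY, "build"]
    if sandbox:
        argv.append("--sandbox")
    return argv + [image, definition]


def exec_argv(image, command, bind=None, nv=True):
    """Return the argv for running a command inside the container.

    bind is a "host_path:container_path" string; nv=True adds --nv so the
    host NVIDIA driver libraries are made available inside the container.
    """
    argv = [SINGULARITY, "exec"]
    if bind:
        argv += ["-B", bind]
    if nv:
        argv.append("--nv")
    return argv + [image] + list(command)


if __name__ == "__main__":
    # Dry run: print the commands instead of executing them.
    print(shlex.join(build_argv("tensorflow_18.simg", "tensorflow_18.def")))
    print(shlex.join(exec_argv("tensorflow_18.simg", ["/bin/bash"],
                               bind="/ml_data/nzhang:/mnt")))
```

Either list can be passed to `subprocess.run` once the printed command looks right; keeping the arguments as a list avoids shell quoting problems with paths.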

Testing Methodology

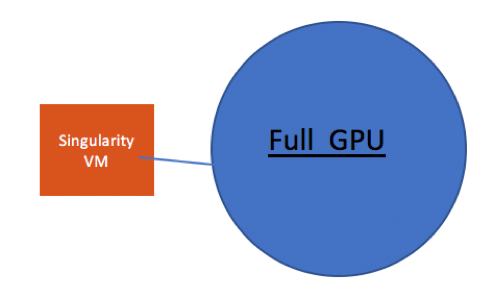

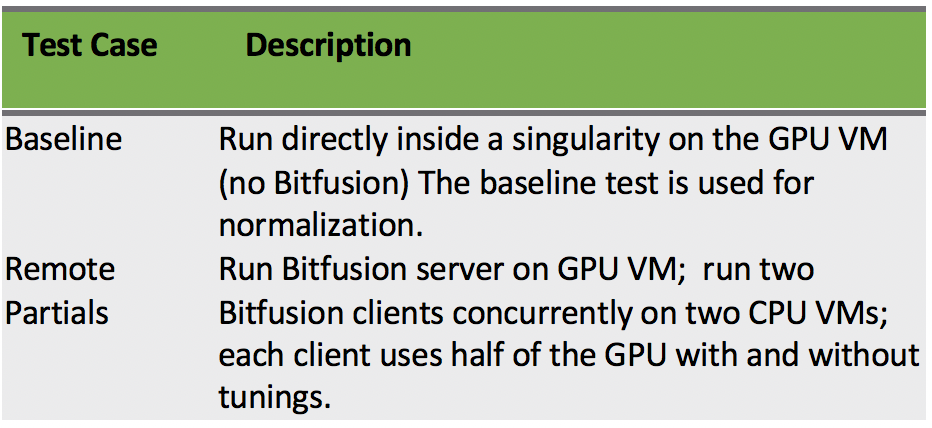

In this study, we compared two Bitfusion configurations: machine learning workloads running in a single container assigned a full GPU, and the same workloads running in four containers, each assigned a quarter of a GPU:

- Baseline tests are run in a Singularity container inside a virtual machine on the same physical host as the Bitfusion server with the GPU attached. This is the best-case scenario, with no overhead from Bitfusion remote networking.

Figure 4: Baseline GPU configuration

- In the partial GPU case, the client VMs, each with a Singularity container, are on different ESX hosts from the server VM and are connected via 10 Gb/s Ethernet

Figure 5: Shared GPU Configuration

The two cases are summarized in the table below.

Table 3: Bitfusion Test Cases

Benchmark: tf_cnn_benchmarks suite (TensorFlow)

The testing was done with tf_cnn_benchmarks, one of the TensorFlow benchmark suites. This benchmark suite is designed for performance, since its models use the strategies described in the TensorFlow Performance Guide. It contains implementations of several popular convolutional models, including Inception3, ResNet50, and AlexNet.
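
As an illustration, runs for the three models named above could be launched with command lines like the ones built below. This is a sketch under assumptions: `--model`, `--batch_size`, and `--num_gpus` are flags of `tf_cnn_benchmarks.py`, but the batch size of 64, the script location, and the helper name `benchmark_argv` are placeholders, not the settings used in this study.

```python
# Model names in the spelling tf_cnn_benchmarks accepts for --model.
MODELS = ["inception3", "resnet50", "alexnet"]


def benchmark_argv(model, batch_size=64, num_gpus=1,
                   script="tf_cnn_benchmarks.py"):
    """Return the argv for one tf_cnn_benchmarks run.

    batch_size and script are illustrative defaults (assumptions),
    not values reported in the study.
    """
    if model not in MODELS:
        raise ValueError(f"unexpected model name: {model}")
    return [
        "python", script,
        f"--model={model}",
        f"--batch_size={batch_size}",
        f"--num_gpus={num_gpus}",
    ]


if __name__ == "__main__":
    # Print one command line per model.
    for name in MODELS:
        print(" ".join(benchmark_argv(name)))
```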

Results:

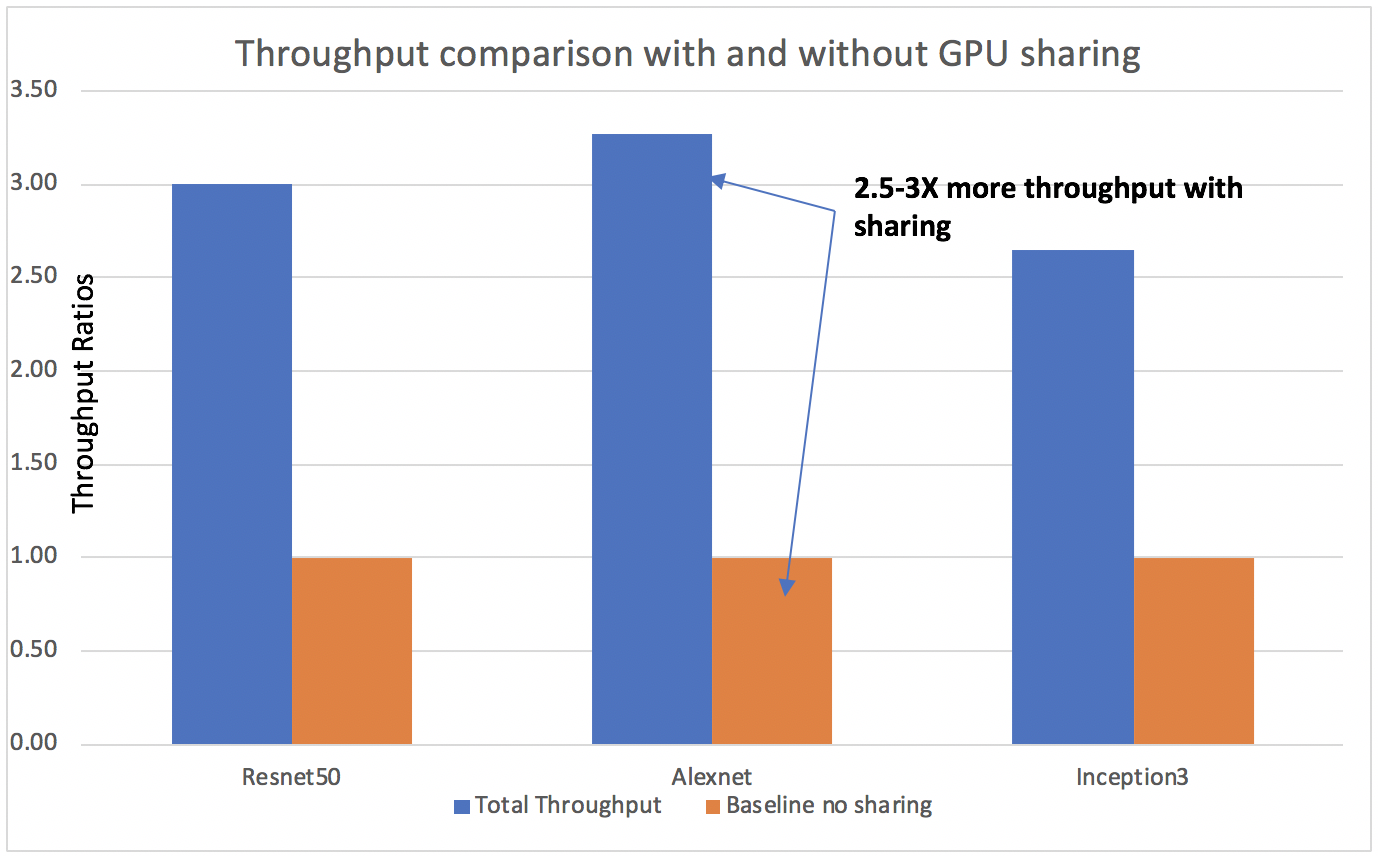

The baseline tests were run on a single virtual machine with full access to the GPU. The models were run in sequence multiple times to get the baseline images processed per second.

Figure 6: Throughput comparison for different models

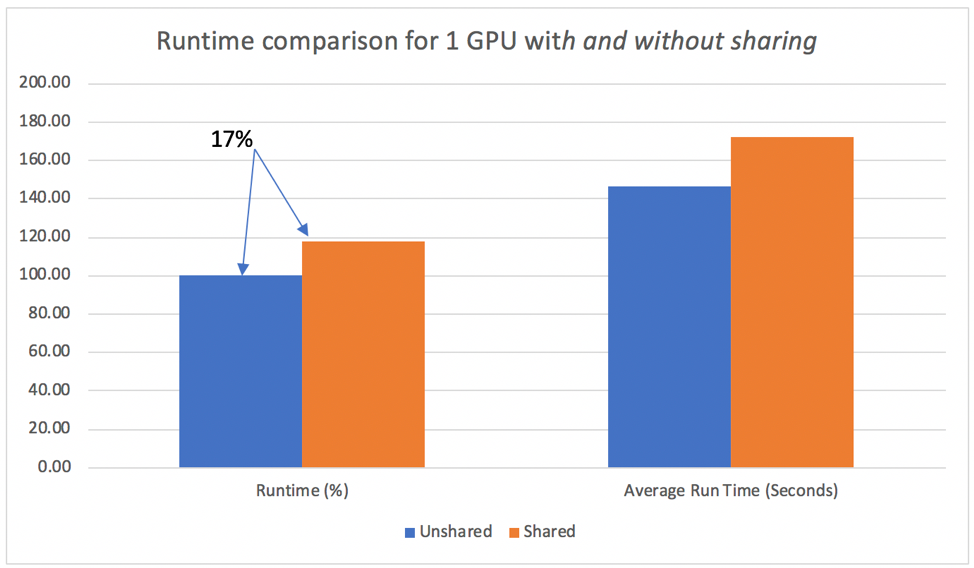

For the sharing use case, the model jobs ran randomly across all four client containers in parallel. Each of the four Singularity container VMs was allocated 25% of the GPU resources. The tests were repeated five times, allowing for some burn-in runs. The throughput, measured in images per second, and the completion time were compared between the shared use case and the baseline.
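
The per-run throughput numbers can be collected from the benchmark logs and averaged after discarding burn-in runs. The sketch below assumes each log contains a summary line of the form `total images/sec: <number>`, as tf_cnn_benchmarks prints at the end of a run; the function names and the choice of one burn-in run are assumptions, and the sample values are made up for illustration.

```python
import re
from statistics import mean

# Matches the summary line, e.g. "total images/sec: 123.45".
_TOTAL_RE = re.compile(r"total images/sec:\s*([0-9]+(?:\.[0-9]+)?)")


def parse_images_per_sec(log_text):
    """Extract the reported total images/sec from one benchmark log."""
    match = _TOTAL_RE.search(log_text)
    if match is None:
        raise ValueError("no 'total images/sec' line found")
    return float(match.group(1))


def steady_state_throughput(per_run, burn_in=1):
    """Average images/sec over the runs left after dropping burn-in runs."""
    kept = per_run[burn_in:]
    if not kept:
        raise ValueError("all runs were discarded as burn-in")
    return mean(kept)


if __name__ == "__main__":
    # Made-up log snippets; these are NOT results from this study.
    logs = [
        "total images/sec: 90.0",
        "total images/sec: 100.0",
        "total images/sec: 102.0",
        "total images/sec: 98.0",
        "total images/sec: 100.0",
    ]
    runs = [parse_images_per_sec(text) for text in logs]
    print(steady_state_throughput(runs, burn_in=1))
```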

Figure 7: Job completion time comparison with and without GPU sharing

Conclusion:

This testing has shown that multiple machine learning workloads can run simultaneously on the same GPU. The results show that GPU sharing on vSphere can provide three times the image processing throughput. The time consumed by the four simultaneous jobs is only 17% longer than that of a single job using the full GPU. Sharing GPUs therefore offers the potential for significant hardware cost savings. Singularity containers and vSphere are a strong combination for machine learning: vSphere provides the capability to share partial GPUs, while Singularity containers simplify the packaging and deployment of complex machine learning environments.
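
The relationship between the two headline numbers can be checked with simple arithmetic. The sketch below is an illustration, not the study's own calculation: it assumes the alternative to sharing is running the four jobs one after another on a full GPU, and it uses only the 17% figure quoted above. The function name `aggregate_speedup` is hypothetical.

```python
def aggregate_speedup(num_jobs, shared_slowdown):
    """Throughput gain from running num_jobs concurrently on a shared GPU.

    shared_slowdown is the fractional increase in completion time of the
    concurrent batch relative to ONE job on the full GPU (0.17 for 17%).
    Running the jobs back to back takes num_jobs time units; running them
    concurrently takes 1 + shared_slowdown units for the same work.
    """
    if num_jobs < 1:
        raise ValueError("num_jobs must be at least 1")
    if shared_slowdown <= -1:
        raise ValueError("shared_slowdown must be greater than -1")
    return num_jobs / (1.0 + shared_slowdown)


if __name__ == "__main__":
    # Four concurrent jobs finishing 17% later than a single full-GPU job.
    print(f"{aggregate_speedup(4, 0.17):.2f}x")
```

Under these assumptions the ratio comes out at about 3.4, which is in line with the roughly threefold throughput gain reported above.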