SUSE Virtualization (also known as Harvester) offers versatile networking options for VMs to use.

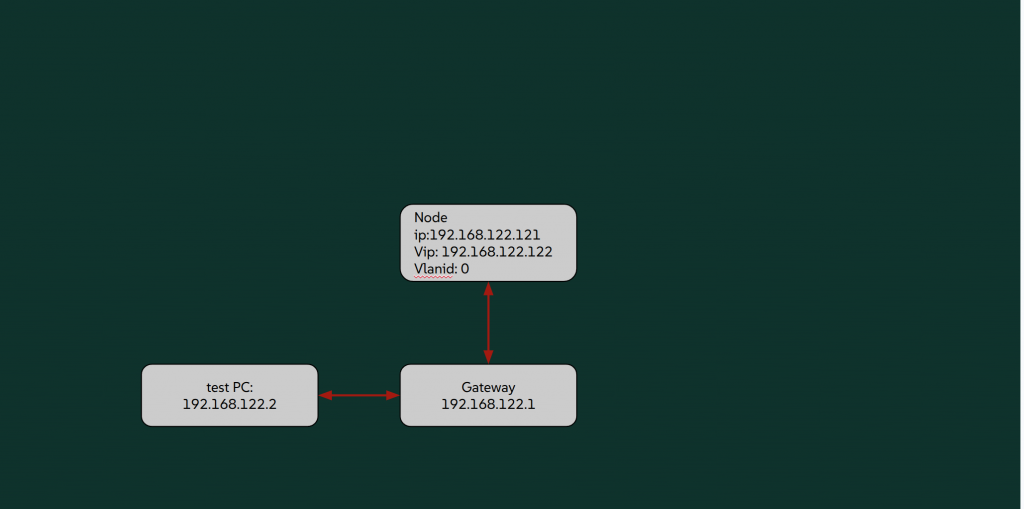

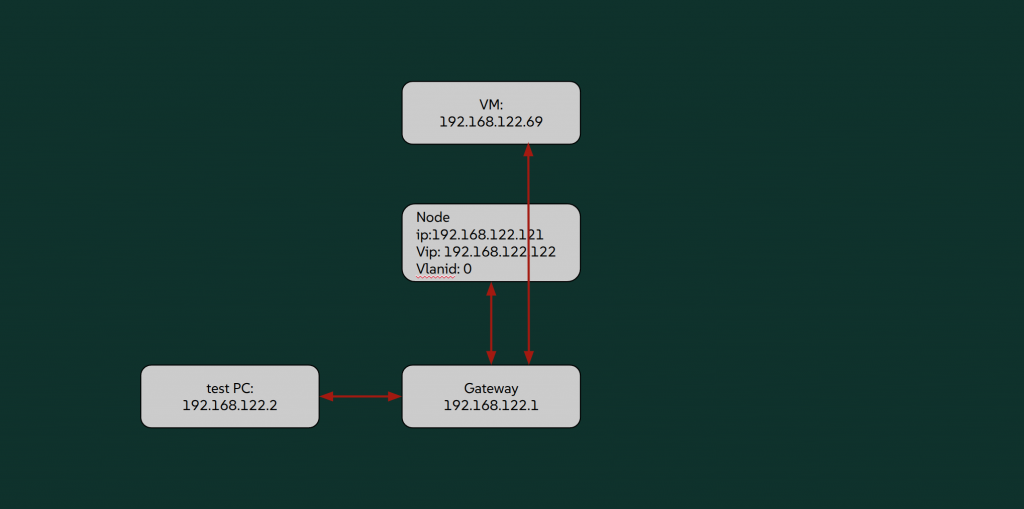

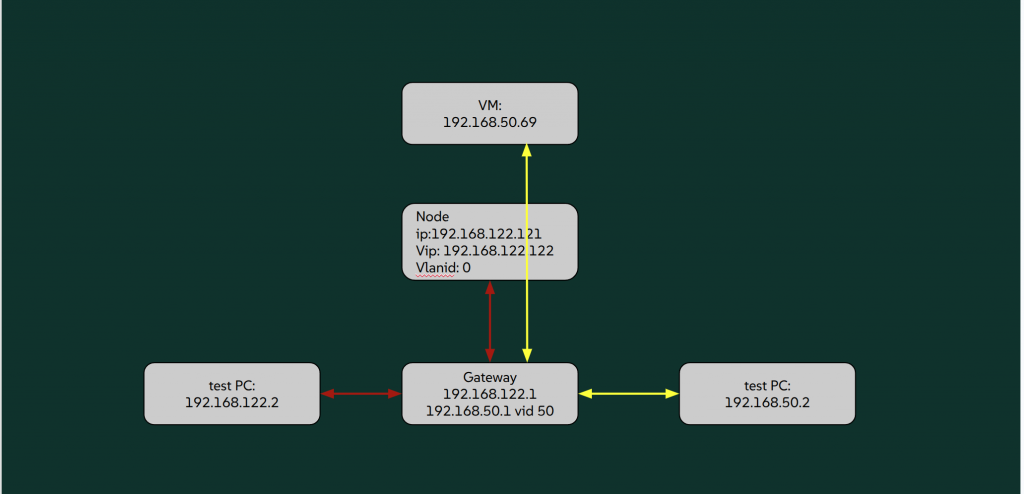

In this blog post, the example SUSE Virtualization cluster is installed with the following infrastructure network settings:

```yaml
install:
  mode: create
  managementinterface:
    interfaces:
      - name: ens3
        hwaddr: ...
    method: dhcp
    ip: ""
    vlanid: 0
  vip: 192.168.122.122
  viphwaddr: ""
  vipmode: static
  clusterdns: ""
  clusterpodcidr: ""
  clusterservicecidr: ""
# This content is saved in the file /oem/harvester.config on the initial node of the cluster.
```
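Leaving `clusterpodcidr` and `clusterservicecidr` empty keeps the installer defaults. A useful sanity check before installation is that the VIP does not fall inside those internal ranges. The Python sketch below assumes the defaults are `10.52.0.0/16` for pods and `10.53.0.0/16` for services (consistent with the pod IP `10.52.0.63` seen later, but verify them for your version); the function name is hypothetical.

```python
import ipaddress

# Assumed default internal ranges used when clusterpodcidr / clusterservicecidr
# are left empty; check the documentation for your SUSE Virtualization version.
DEFAULT_POD_CIDR = "10.52.0.0/16"
DEFAULT_SERVICE_CIDR = "10.53.0.0/16"


def vip_conflicts(vip, pod_cidr="", service_cidr=""):
    """Return the names of internal ranges that contain the VIP (empty list means OK)."""
    addr = ipaddress.ip_address(vip)
    ranges = {
        "clusterpodcidr": pod_cidr or DEFAULT_POD_CIDR,
        "clusterservicecidr": service_cidr or DEFAULT_SERVICE_CIDR,
    }
    return [name for name, cidr in ranges.items()
            if addr in ipaddress.ip_network(cidr)]


print(vip_conflicts("192.168.122.122"))  # VIP from the example config: []
print(vip_conflicts("10.52.0.10"))       # would clash: ['clusterpodcidr']
```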

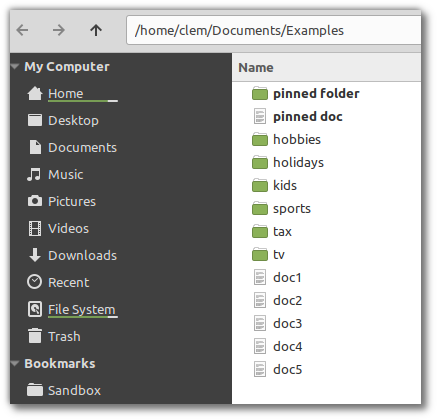

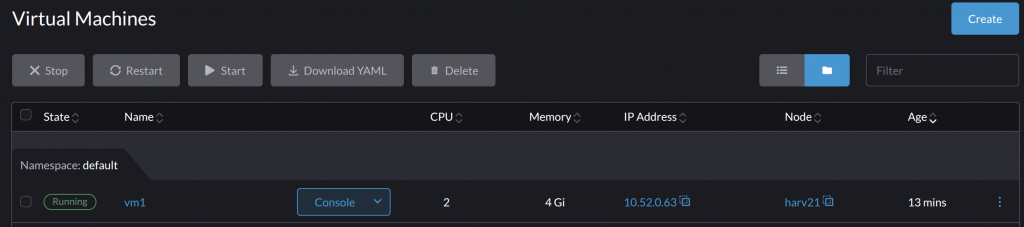

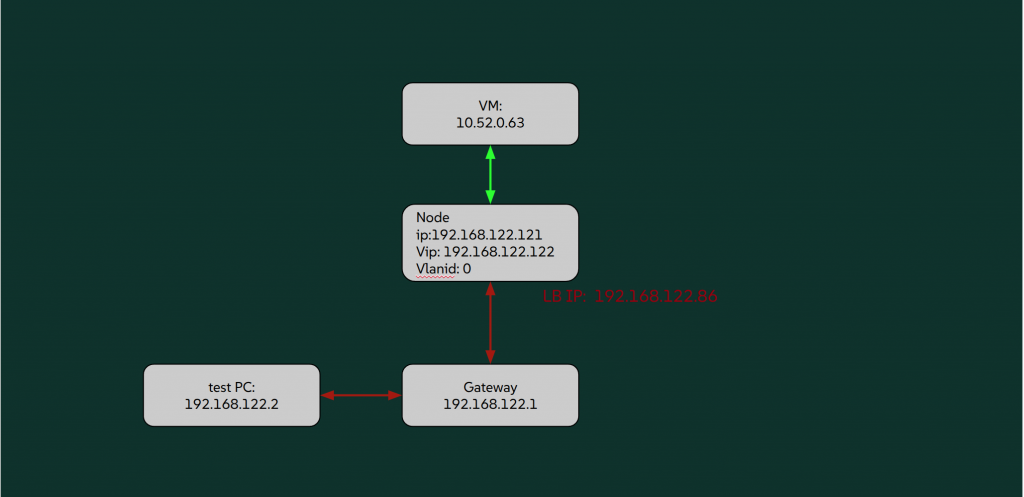

A simplified network diagram is shown below. For a more detailed network topology, see Network Topology.

Built-in management (mgmt) cluster network


After the cluster is installed, SUSE Virtualization automatically sets up an internal, built-in cluster network called mgmt. The mgmt network is the foundation of the Kubernetes cluster network.

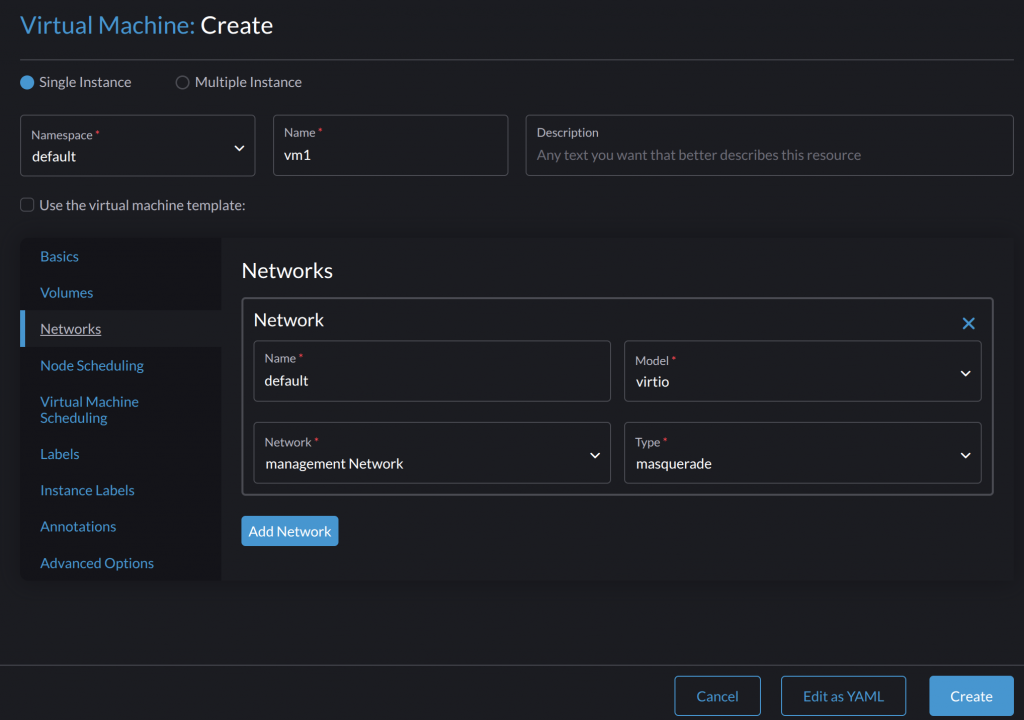

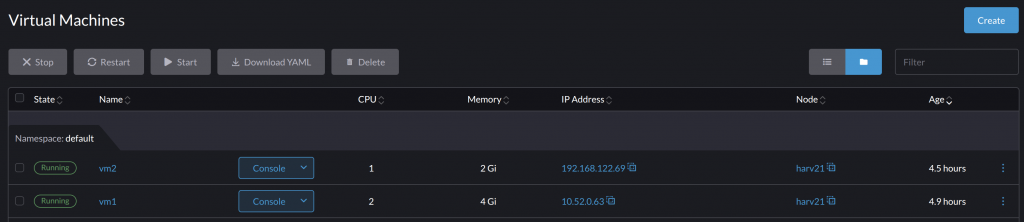

VM on the default Kubernetes cluster POD network

Create a new VM, vm1, on the default network. It does not require any additional configuration after the cluster is installed.

When vm1 is up, the SUSE Virtualization UI shows that the VM has the IP address 10.52.0.63. This IP comes from the aforementioned Kubernetes pod network.

vm1 can connect to external networks.

```console
rancher@vm1:~$ ip addr
...
2: enp1s0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc fq_codel state UP group default qlen 1000
    link/ether ae:98:29:b3:44:32 brd ff:ff:ff:ff:ff:ff
    inet 10.0.2.2/24 brd 10.0.2.255 scope global dynamic enp1s0
       valid_lft 86313380sec preferred_lft 86313380sec
    inet6 fe80::ac98:29ff:feb3:4432/64 scope link
       valid_lft forever preferred_lft forever
rancher@vm1:~$ ping 8.8.8.8
PING 8.8.8.8 (8.8.8.8) 56(84) bytes of data.
64 bytes from 8.8.8.8: icmp_seq=1 ttl=116 time=8.67 ms
```

However, vm1 can’t be reached directly from outside the cluster.

:::note

- Because vm1 uses a pod IP, it gets a new, unpredictable IP each time it is restarted or migrated to another node.
- Inside vm1, the IP is shown as `10.0.2.2`. All VMs of this kind see this same address.

:::
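A fixed `10.0.2.2` inside every VM is characteristic of KubeVirt's masquerade interface binding, in which the VM keeps a private address and traffic is translated to and from the pod IP. Assuming that binding (this post does not name it), the Python sketch below models the address rewriting for vm1. The `Packet` type and the helper names are hypothetical, and the model ignores ports and the real packet-filter rules on the node.

```python
from dataclasses import dataclass, replace

VM_INTERNAL_IP = "10.0.2.2"  # address seen inside every VM using this binding


@dataclass(frozen=True)
class Packet:
    src: str
    dst: str


def outbound(pkt, pod_ip):
    """VM to network: the source is rewritten from the VM-internal IP to the pod IP."""
    if pkt.src != VM_INTERNAL_IP:
        raise ValueError("packet did not originate from the VM")
    return replace(pkt, src=pod_ip)


def inbound(pkt, pod_ip):
    """Network to VM: traffic addressed to the pod IP is rewritten to the VM-internal IP."""
    if pkt.dst != pod_ip:
        return None  # not addressed to this VM's pod
    return replace(pkt, dst=VM_INTERNAL_IP)


# vm1's pod IP from the UI; another VM would have a different pod IP
# but the same internal 10.0.2.2 address.
print(outbound(Packet(VM_INTERNAL_IP, "8.8.8.8"), "10.52.0.63"))
# Packet(src='10.52.0.63', dst='8.8.8.8')
print(inbound(Packet("8.8.8.8", "10.52.0.63"), "10.52.0.63"))
# Packet(src='8.8.8.8', dst='10.0.2.2')
```

Because the pod IP is only routable inside the cluster and changes whenever vm1 restarts or migrates, external clients have no stable address to reach it. The load balancer in the next section addresses that.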

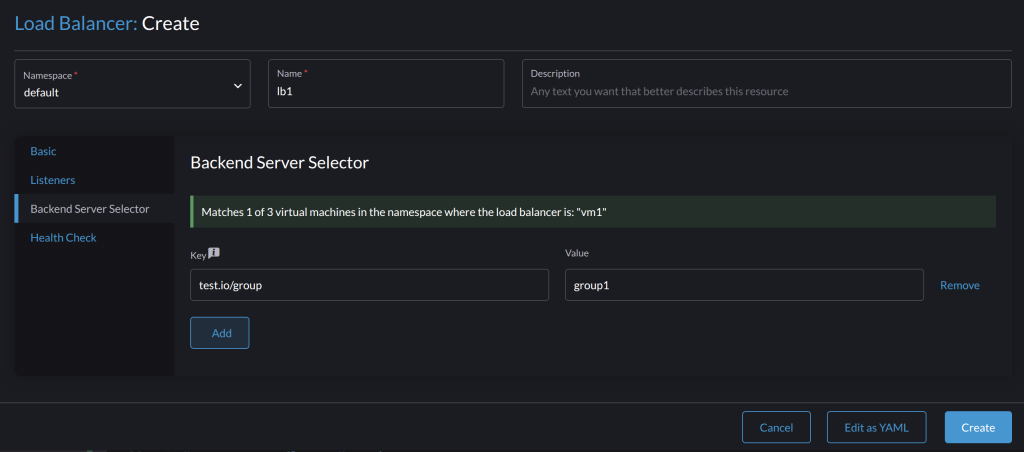

Load balancer for the VM

The SUSE Virtualization Load Balancer provides a solution to assign an externally accessible IP to vm1. This IP is fixed, no matter whether vm1 is restarted or migrated.

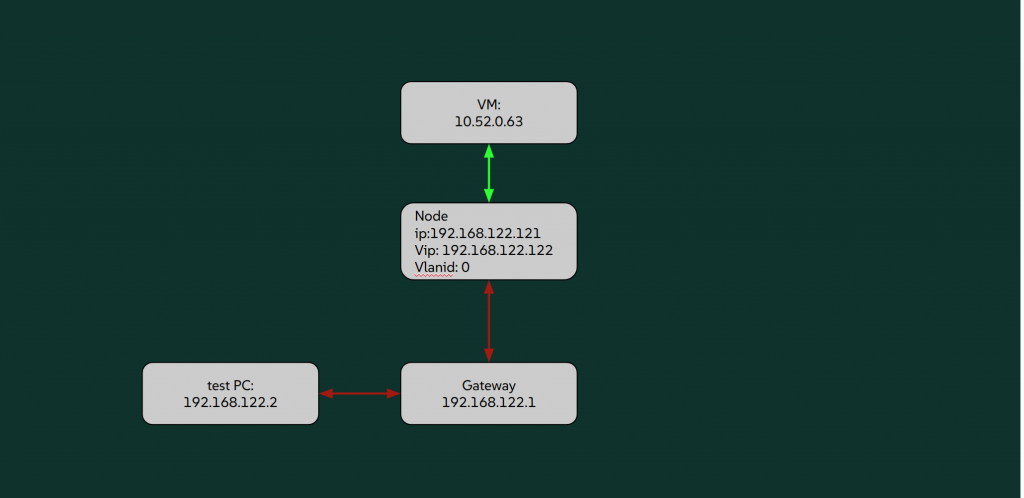

Create a load balancer lb1.

On the Backend Server Selector page, specify the labels that identify the backend servers (virtual machine instances). The UI indicates whether any servers match.

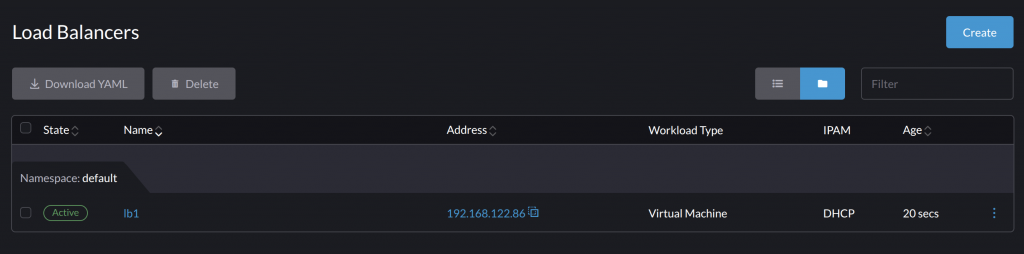

lb1 becomes Active once it obtains an IP (from a DHCP server or an IP pool) and selects at least one backend server.

The load balancer IP exposes the VM externally. The resulting lb1 object looks like this:

```yaml
apiVersion: loadbalancer.harvesterhci.io/v1beta1
kind: LoadBalancer
metadata:
  name: lb1
  namespace: default
spec:
  backendServerSelector:
    test.io/group:
      - group1
  healthCheck: {}
  ipam: dhcp
  listeners:
    - backendPort: 8000
      name: http
      port: 80
      protocol: TCP
  workloadType: vm
status:
  address: 192.168.122.86
  allocatedAddress:
    ip: 0.0.0.0
  backendServers:
    - 10.52.0.63
  conditions:
    - lastUpdateTime: "2025-10-30T09:55:52Z"
      status: "True"
      type: Ready
```
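The `backendServerSelector` in lb1 maps a label key to a list of accepted values, and `status.backendServers` lists the IPs it picked. The Python sketch below shows one plausible reading of that selector: a VM is selected when, for every key, its label value is one of the listed values. The matching semantics, the VM inventory, and the helper names are assumptions for illustration; the readiness rule only mirrors the description above (an address plus at least one backend).

```python
def matches(selector, labels):
    """True if, for every key in the selector, the VM's label value is one of the allowed values."""
    return all(labels.get(key) in values for key, values in selector.items())


def select_backends(selector, vms):
    """Return the IPs of VMs whose labels satisfy the selector."""
    return [vm["ip"] for vm in vms if matches(selector, vm["labels"])]


def is_active(address, backends):
    # An address has been obtained and at least one backend server is selected.
    return bool(address) and len(backends) > 0


selector = {"test.io/group": ["group1"]}  # from lb1's spec
vms = [  # hypothetical inventory; only vm1's IP comes from the example
    {"name": "vm1", "ip": "10.52.0.63", "labels": {"test.io/group": "group1"}},
    {"name": "vm9", "ip": "10.52.0.70", "labels": {"test.io/group": "group2"}},
]
backends = select_backends(selector, vms)
print(backends)                               # ['10.52.0.63']
print(is_active("192.168.122.86", backends))  # True
```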

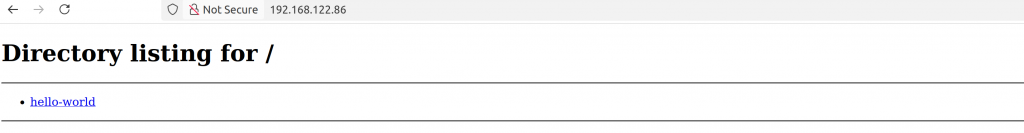

Start a simple HTTP server on vm1.

```console
rancher@vm1:~/test$ python3 -m http.server
Serving HTTP on 0.0.0.0 port 8000 (http://0.0.0.0:8000/) ...
```

Open the LB IP in a web browser. The request succeeds: the `http` listener accepts it on port 80 and forwards it to backend port 8000 on vm1.
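The same check can be scripted. The Python sketch below builds the URL from lb1's status address and listener port and issues a plain GET using only the standard library. The helper names are hypothetical, and `192.168.122.86` is only meaningful in this example environment.

```python
import urllib.error
import urllib.request


def lb_url(address, port, path="/"):
    """Build the client URL: the LB address plus the listener port (not the backend port)."""
    return f"http://{address}:{port}{path}"


def probe(url, timeout=3.0):
    """Return the HTTP status code, or None if the LB could not be reached."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code  # reached a server, but it answered with an error status
    except OSError:
        return None  # connection refused, timeout, no route, ...


# Listener "http" maps port 80 on the LB to backendPort 8000 on vm1.
url = lb_url("192.168.122.86", 80)
print(url)  # http://192.168.122.86:80/
# probe(url) returns 200 when run from a host that can reach the LB.
```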

:::note

An IP pool can be used to assign fixed, pre-planned IPs to load balancers instead of relying on DHCP.

:::
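To illustrate what planning fixed IPs means, the Python sketch below implements a hypothetical first-free allocator over an address range that keeps a load balancer's address stable once assigned. The range `192.168.122.200` to `192.168.122.209`, the `IpPool` class, and the allocation order are all assumptions; this is not the IPPool controller's actual algorithm.

```python
import ipaddress


class IpPool:
    """Hypothetical pool handing out fixed IPs from an inclusive start-end range."""

    def __init__(self, start, end):
        first = int(ipaddress.IPv4Address(start))
        last = int(ipaddress.IPv4Address(end))
        self.free = [ipaddress.IPv4Address(i) for i in range(first, last + 1)]
        self.allocated = {}  # LB "namespace/name" -> address

    def allocate(self, lb):
        # An LB that already holds an address keeps it, so its IP is stable.
        if lb in self.allocated:
            return self.allocated[lb]
        if not self.free:
            raise RuntimeError("pool exhausted")
        addr = self.free.pop(0)
        self.allocated[lb] = addr
        return addr

    def release(self, lb):
        # Released addresses go to the back of the free list.
        addr = self.allocated.pop(lb, None)
        if addr is not None:
            self.free.append(addr)


pool = IpPool("192.168.122.200", "192.168.122.209")
print(pool.allocate("default/lb1"))  # 192.168.122.200
print(pool.allocate("default/lb2"))  # 192.168.122.201
print(pool.allocate("default/lb1"))  # 192.168.122.200 (unchanged)
```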

The out-of-the-box features are helpful for establishing basic network connectivity for VMs.

Customized VM Networks on mgmt

A VM can also obtain an externally reachable IP directly.

Untagged VM Network

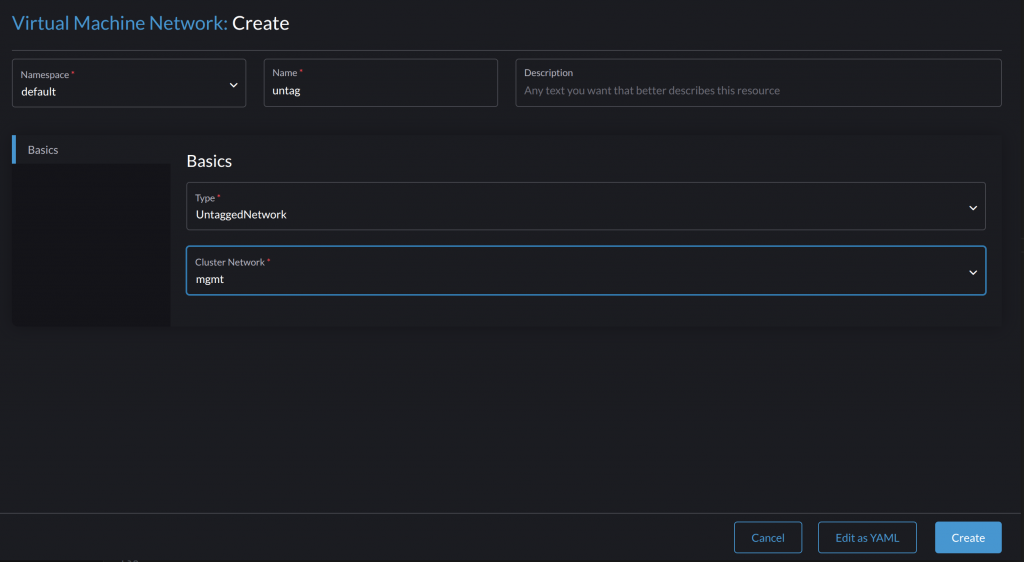

In the above example, the infrastructure network uses the traditional untagged mode. When a VM is intended to use the infrastructure network directly, the Untagged VM Network is the right choice.

Create a Virtual Machine Network named `untag` with Type: UntaggedNetwork.

Create vm2 and attach it to the `untag` network.

Once created, vm2 gets an IP (192.168.122.69) from the host nodes' subnet. This IP is directly reachable from outside the cluster.
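One way to see the difference from vm1 is to check which addresses fall inside the host subnet. The Python sketch below assumes the host subnet is `192.168.122.0/24`; the example config obtains its address via DHCP, so the real prefix length is not shown and may differ. The helper name is hypothetical.

```python
import ipaddress

# Assumed host subnet; the real prefix length may differ in your environment.
HOST_SUBNET = ipaddress.ip_network("192.168.122.0/24")


def on_host_subnet(ip, subnet=HOST_SUBNET):
    """True if the address belongs to the (assumed) host node subnet."""
    return ipaddress.ip_address(ip) in subnet


addresses = {
    "cluster VIP": "192.168.122.122",
    "vm2 (untag network)": "192.168.122.69",
    "vm1 (pod network)": "10.52.0.63",
}
for name, ip in addresses.items():
    print(f"{name:20} {ip:16} on host subnet: {on_host_subnet(ip)}")
```

Only vm1's pod IP falls outside the assumed host subnet, which is why vm1 needs a load balancer to be reachable from outside while vm2 does not.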

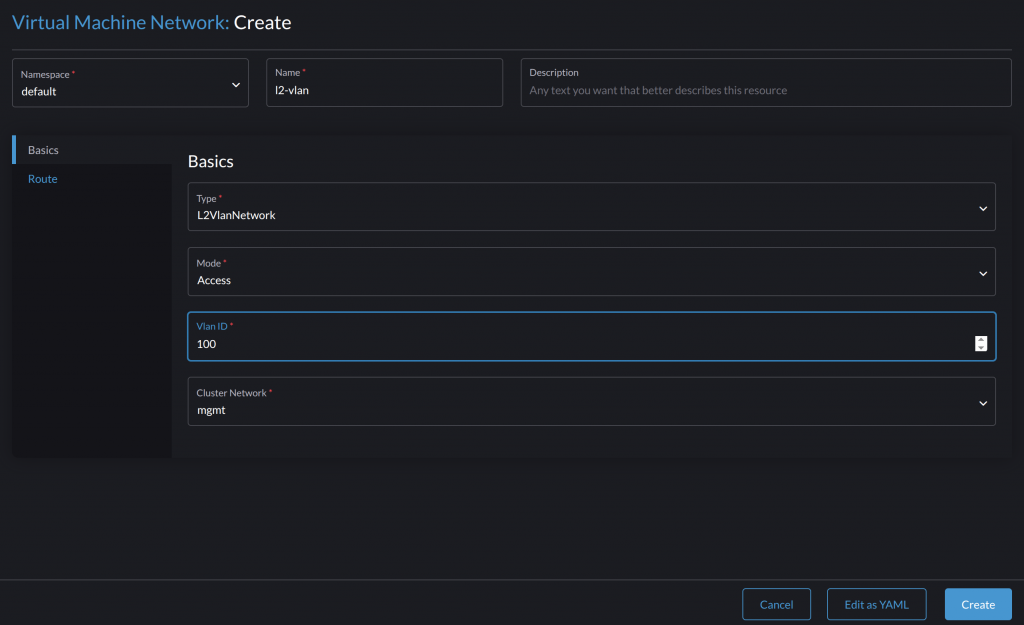

L2Vlan VM Network

It is common practice to isolate different types of network traffic. When the infrastructure network supports L2 VLANs, SUSE Virtualization can take advantage of them by creating multiple L2VLAN VM networks.

Create a Virtual Machine Network with type L2VlanNetwork.

Set the VLAN ID.

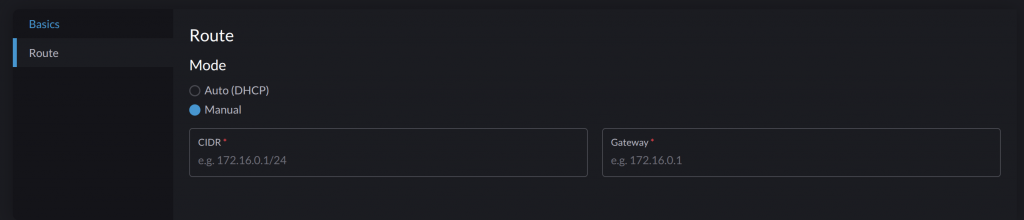

Set the Route mode to DHCP or Manual. In DHCP mode, SUSE Virtualization tries to request an IP to verify that the DHCP server for that network is working correctly.

Create a VM that is attached to the new VM network. In the following example, the VM is attached to the VLAN 50 network.

:::note

- Inside the VM, sent and received network packets do not carry a VLAN tag; the L2 VLAN is transparent to VMs.
- VMs on different L2VLAN VM networks are isolated by default.

:::
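Both points in the note can be captured in a small model: on the host uplink, frames carry a VLAN tag; a VM attached to an L2VLAN VM network receives only frames for that network's VID, and receives them untagged. The Python sketch below is a conceptual model with hypothetical names, not the actual bridge implementation on the host.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Frame:
    payload: bytes
    vid: Optional[int] = None  # None means the frame carries no 802.1Q tag


def to_vm(frame, network_vid):
    """Host uplink to VM: keep only frames for this network's VID and strip the tag."""
    if frame.vid != network_vid:
        return None  # frames from other VLANs never reach this VM
    return Frame(frame.payload)  # the VM sees an untagged frame


def from_vm(frame, network_vid):
    """VM to host uplink: the VM's untagged frame gets the network's VID added."""
    return Frame(frame.payload, network_vid)


print(to_vm(Frame(b"hello", vid=50), network_vid=50))   # Frame(payload=b'hello', vid=None)
print(to_vm(Frame(b"hello", vid=100), network_vid=50))  # None
print(from_vm(Frame(b"reply"), network_vid=50))         # Frame(payload=b'reply', vid=50)
```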

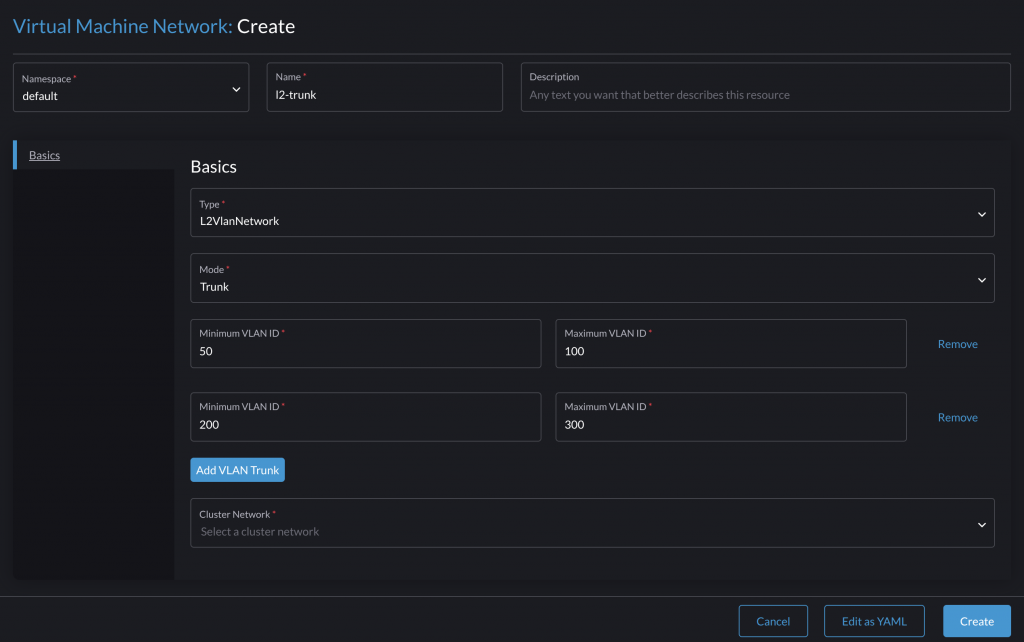

L2VlanTrunk VM Network

Available as of v1.7.0

Some applications expect to operate L2 VLANs directly from inside the VM. SUSE Virtualization supports this via the new VM Network type L2VlanTrunk.

Create a VM network with Type: L2VlanNetwork and Mode: Trunk, then use the Add Vlan Trunk menu to add one or more blocks of allowed VIDs.

When VMs are attached to this type of VM network, the OS and applications are responsible for adding and removing VLAN tags.
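Because the allowed VIDs are entered as several blocks, it can help to see how such blocks combine. The Python sketch below normalizes a list of inclusive `(min, max)` VID blocks and checks whether a given tag is allowed. The tuple representation and the helper names are assumptions, not the VM network's stored format; the 1 to 4094 bound is the usable 802.1Q VID range.

```python
def normalize(blocks):
    """Validate and merge overlapping or adjacent inclusive (min, max) VID blocks."""
    merged = []
    for lo, hi in sorted(blocks):
        if not (1 <= lo <= hi <= 4094):
            raise ValueError(f"invalid VID block {lo}-{hi}")
        if merged and lo <= merged[-1][1] + 1:
            # Overlaps or touches the previous block: extend it.
            merged[-1] = (merged[-1][0], max(merged[-1][1], hi))
        else:
            merged.append((lo, hi))
    return merged


def is_allowed(vid, blocks):
    """True if the VID falls inside any allowed block."""
    return any(lo <= vid <= hi for lo, hi in blocks)


trunk = normalize([(100, 200), (50, 50), (150, 300)])  # hypothetical trunk blocks
print(trunk)                   # [(50, 50), (100, 300)]
print(is_allowed(50, trunk))   # True
print(is_allowed(400, trunk))  # False
```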

For instance, vm-trunk has two NICs, and enp2s0 is attached to the trunk VM network. Users can create sub-interfaces such as enp2s0.50, enp2s0.100 and enp2s0.200 to work with VIDs 50, 100 and 200. The OS adds VID 50 to packets sent through sub-interface enp2s0.50, and removes VID 50 from received packets before delivering them to enp2s0.50.

```console
rancher@vm-trunk:~$ ip link
...
3: enp2s0: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN mode DEFAULT group default qlen 1000
    link/ether ca:91:01:1d:12:5f brd ff:ff:ff:ff:ff:ff
rancher@vm-trunk:~$ sudo -i ip link add link enp2s0 enp2s0.50 type vlan id 50  # create sub-interface for VID 50
rancher@vm-trunk:~$ sudo -i ip addr add dev enp2s0.50 192.168.50.99/24
rancher@vm-trunk:~$ sudo -i ip link set enp2s0 up
rancher@vm-trunk:~$ sudo -i ip link set enp2s0.50 up
```
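To make "the OS adds VID 50" concrete: an 802.1Q tag is 4 bytes inserted after the destination and source MAC addresses, consisting of the TPID `0x8100` followed by a 16-bit TCI whose low 12 bits carry the VID. The Python sketch below shows what happens to a frame sent through `enp2s0.50` and received back. The frame contents are made up (only the source MAC is taken from the `ip link` output), and in practice the kernel, possibly with NIC offload, does this work itself.

```python
import struct

TPID_8021Q = 0x8100


def push_vlan(frame, vid, pcp=0):
    """Insert an 802.1Q tag after the destination and source MACs (first 12 bytes)."""
    if not 1 <= vid <= 4094:
        raise ValueError("VID must be 1-4094")
    tci = (pcp << 13) | vid  # PCP (3 bits) | DEI (1 bit, 0 here) | VID (12 bits)
    return frame[:12] + struct.pack("!HH", TPID_8021Q, tci) + frame[12:]


def pop_vlan(frame):
    """Return (vid, untagged_frame); vid is None if the frame carries no 802.1Q tag."""
    tpid, tci = struct.unpack("!HH", frame[12:16])
    if tpid != TPID_8021Q:
        return None, frame
    return tci & 0x0FFF, frame[:12] + frame[16:]


# Made-up untagged frame: dst MAC, src MAC (vm-trunk's enp2s0), EtherType IPv4, payload.
dst = bytes.fromhex("ffffffffffff")
src = bytes.fromhex("ca91011d125f")
untagged = dst + src + struct.pack("!H", 0x0800) + b"payload"

tagged = push_vlan(untagged, 50)           # what leaves enp2s0 for enp2s0.50
print(tagged[12:16].hex())                 # 81000032
print(pop_vlan(tagged) == (50, untagged))  # True
```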

:::note

An example scenario is running SUSE Virtualization nested on SUSE Virtualization. The host SUSE Virtualization cluster creates VMs attached to an L2VlanTrunk VM network, which passes network traffic carrying any of the allowed VLAN tags.

A guest SUSE Virtualization cluster is installed on these VMs. The guest cluster can in turn run its own VMs on different L2VLAN VM networks, which are backed by the host's L2VlanTrunk VM network.

:::

Overlay VM Network

Available as of v1.6.0

The Overlay VM Network is an exciting new feature of SUSE Virtualization. It will be introduced in a separate blog post.

SUSE Virtualization Custom Cluster Network

In production deployments, the general practice is to further isolate management network traffic from data/workload network traffic. Harvester supports flexible custom cluster networks, which build one or more dedicated network planes for specific purposes.

Once a custom cluster network is set up, VM networks on it are configured in the same way as described above for mgmt.

VMs can attach to these dedicated networks freely.

Additional

SUSE Virtualization Storage Network and VM Migration Network

The SUSE Virtualization Storage Network provides a dedicated network for the default CSI driver of SUSE Virtualization, SUSE Storage (also known as Longhorn).

It is built on top of the SUSE Virtualization L2 VLAN VM network.

The SUSE Virtualization VM Migration Network is similar: it provides a dedicated network for virtual machine live migration traffic.

Conclusion

The versatile networking options in SUSE Virtualization provide exceptional configuration flexibility, allowing users to effectively balance performance, traffic isolation, security, and other critical operational requirements across diverse use cases.
